Content:

In the ocean and marine sector, SeaDataNet is the leading initiative in Europe, actively operating and further developing a Pan-European infrastructure for managing, indexing and providing access to ocean and marine data sets and data products acquired via research cruises and other observational activities. SeaDataNet is co-funded by the EU FP6 Research Infrastructures programme (2006 - 2011). SeaDataNet interconnects 40 data centres from 35 countries bordering the European seas to provide integrated on-line access to the most comprehensive sets of multidisciplinary in-situ and remote sensing marine data, metadata and products.

This Newsletter presents the current state of implementation of the SeaDataNet V1 infrastructure and services. Very good progress has been made with upgrading most of the services from V0 to V1. The SeaDataNet website (http://www.seadatanet.org/) has been completely overhauled and now gives an overview, background information and access to the latest releases of the standards, tools and services of SeaDataNet. A very important milestone is the operational launch of the Common Data Index (CDI) V1 service, which now offers harmonised access to data sets that are managed by SeaDataNet data centres in a distributed network. Many data centres are already connected and complete coverage is planned by the end of 2009.

This Newsletter also reports on the adoption of SeaDataNet standards and tools by the members of EuroGeoSurveys, the European Association of Geological Surveys, for expanding the offer of SeaDataNet with geological and geophysical data sets. Moreover, it reports on the successful bids made by SeaDataNet for pilots of EMODNET, the European Marine Observation and Data Network, which the EU is proposing in its Blue Book on an integrated EU maritime policy to facilitate long-term and sustainable access to the interoperable, high-quality data necessary to understand the biological, chemical and physical behaviour of seas and oceans.

Many results will be presented at the International Conference on Marine Data and Information Systems - IMDIS2010, which will be held in Paris, France from March 29 to March 31, 2010, and is organised by SeaDataNet. All readers are encouraged to consider participating in this conference and to submit relevant papers. It will be a good opportunity to get a comprehensive overview of ongoing activities and to discuss further cooperation and cross-fertilisation.

Finally this Newsletter again presents a number of the SeaDataNet data centres in more detail.

The third SeaDataNet annual plenary meeting was organised by the Instituto Español de Oceanografía (IEO) and took place from Wednesday 25th March to Friday 27th March, 2009 in Madrid, Spain. The agenda and all the presentations of the meeting are available at the SeaDataNet website, which has been completely updated to cover the present status of the SeaDataNet developments and results.

SeaDataNet website http://www.seadatanet.org.

Paris (France) Monday 29 March to Wednesday 31 March, 2010

First Call for Papers

The IMDIS cycle of conferences aims to provide an overview of the existing information systems that serve different users in ocean science. It also shows the progress made in developing efficient infrastructures for managing large and diverse data sets, standards, interoperable information systems, services and tools for education.

IMDIS 2010 will be organised in four sessions where developments on standards, data circulation, services, and interoperability will be shown and compared:

- Data quality issues in ocean science

Observations and analysed data control procedures, Protocols, Quality of Services for real time and delayed mode data

- Data Circulation and Services in ocean science

Data access and sustainability, Web services, Network services, technologies and security, Open-source tools and technology on marine data management

- Interoperability and Standards in Marine Data Management

Open standards, ISO and OGC standards applications, Data/Metadata models, Common vocabularies, Interoperability forms

- Education in ocean science

Education and Research, Internet and stand-alone tools for education, Test bed development for educational purposes, International collaboration in education

Abstract submission

Extended abstracts (1-2 pages) must be submitted through the SeaDataNet web pages (http://www.seadatanet.org/imdis2010, from 1st June 2009).

Selection Criteria

Abstracts on the scientific and technological topics will be selected for oral presentations and poster displays by an International Scientific Committee. The basis for acceptance of papers will be relevance to the main topics of the conference and scientific quality.

Papers for oral presentation should preferably deal with technological solutions for effective ocean and marine data management and should promote discussion among the different communities of developers and users. Posters should generally display infrastructures, technologies, services for accessing information and data in distributed systems, etc.

Deadline for abstract submission

Deadline for submission of papers and posters: December 15, 2009.

Please visit the website for up-to-date information and submissions:

http://www.seadatanet.org/imdis2010

Place and Dates

The conference will be held in Paris, France. The duration of the Conference will be three days, from Monday 29 March to Wednesday 31 March, 2010.

Organisation

Organised by IFREMER, jointly with SeaDataNet and IOC/IODE.

Introduction:

SeaDataNet is delivering and operating the infrastructure in 3 versions:

- Version 0: maintenance and further development of the metadata systems developed by the predecessor Sea-Search project.

- Version 1: harmonisation and upgrading of the metadatabases through adoption of the ISO 19115 metadata standard and provision of transparent data access and download services from all partner data centres through upgrading the Common Data Index (CDI) services and deployment of a data object delivery service.

- Version 2: adding data product services and OGC compliant viewing services and further virtualisation of data access.

SeaDataNet Version 0:

The SeaDataNet portal has been set up at http://www.seadatanet.org and it provides a platform for all SeaDataNet services and standards as well as background information about the project and its partners. The developments made during the Sea-Search project were focused on metadata and have designed and populated an array of Pan-European discovery services of marine data and information resources. These services are all available for public users with dedicated on-line user interfaces and are maintained by SeaDataNet partners with entries from all the 35 countries:

EDMED:

The European Directory of Marine Environmental Data (EDMED) was initiated in 1991 by BODC. It is a high level inventory of datasets relating to the marine environment. EDMED is a comprehensive reference to the marine data and sample collections held within Europe providing marine scientists, engineers and policy makers with a simple mechanism for their discovery. It covers all marine environmental disciplines. At present, EDMED describes more than 3300 data sets, held at over 630 Data Holding Centres from countries across Europe.

CSR:

The ROSCOP (Report of Observations/ Samples Collected by Oceanographic Programmes) was conceived by IOC/IODE in the late 1960s in order to provide a coarse-grained inventory for tracking oceanographic data collected by research vessels. The ROSCOP form was extensively revised in 1990, and was re-named the Cruise Summary Report (CSR). Most marine disciplines are represented in the CSR, including physical, chemical, and biological oceanography, fisheries, marine contamination/ pollution, and marine geology and meteorology.

Traditionally, it is the Chief Scientist's obligation to submit a CSR to his/her National Oceanographic Data Centre (NODC) not later than two weeks after the cruise. These have been periodically transmitted to the World Data Centres for Oceanography and to ICES.

In the late 1980s ICES led the effort to digitise the ROSCOP/CSR information and pioneered the development of a database for this information, and, in collaboration with IOC/IODE, developed and maintained a PC-based CSR entry tool and search facility. The emphasis for this was on ICES member countries, but extended to other countries who wished to submit their information. The CSR activity gained new momentum in Europe during the EURONODIM/Sea-Search projects and is being further developed in SeaDataNet under the lead of BSH/DOD, Germany. The combined ICES and Sea-Search/SeaDataNet CSR database now comprises details of over 35000 oceanographic research cruises primarily from Europe and North America, but also including some other regions (e.g. Japan, Australia), with some information extending as far back as the 1940s.

The Cruise Summary Report (CSR) metadatabase contains details of completed cruises and provides summary information of oceanographic measurements made and samples taken.

EDMERP:

The European Directory of Marine Environmental Projects (EDMERP) was initiated in the EURONODIM project and gives an overview of research projects relating to the marine environment. It covers all marine environmental disciplines. Research projects are catalogued as factsheets with their most relevant aspects. The primary objective is to support users in identifying interesting research activities and in connecting them to the research managers involved and to project results such as data, models and publications across Europe. Currently, EDMERP describes more than 1800 Research Projects, from a wide range of disciplines, from over 300 Research Institutes.

EDIOS:

The European Directory of the Ocean-observing System (EDIOS), an initiative of EuroGOOS, gives an overview of the ocean measuring and monitoring systems operated by European countries. The directory is a prerequisite for the full implementation of EuroGOOS providing an inventory of the continuously available data for operational models. This information provides the basis for optimal deployment of new instruments, and the design of sampling strategies. This directory includes discovery information on location, measured parameters, data availability, responsible institutes and links to data-holding agencies plus some more technical information on instruments such as sampling frequency. The EDIOS directory currently holds well over 12,000 data entries.

EDMO:

The European Directory of Marine Organisations (EDMO) contains the contact information and activity profiles for the organisations whose data are described by the metadatabases. In the early days this was poorly co-ordinated, with information of this type held separately in each metadatabase. During SeaDataNet, however, a central organisations database has been set up, maintained by project partners through a content management system, which streamlines the information management and ensures that information is internally consistent. EDMO at present covers more than 1,500 addresses and profiles of organisations.

CDI:

The Common Data Index (CDI) gives detailed insight into the available data objects in partners' databases, paving the way to SeaDataNet's ultimate objective of direct online data access. The CDI was initiated as a pilot within Sea-Search. For purposes of standardisation and international exchange the ISO 19115 metadata standard was adopted and the CDI format defined by profiling this standard. Within SeaDataNet V0 the Sea-Search CDI version has been populated with entries from all SeaDataNet data centres. The CDI V0 metadatabase contains more than 340,000 CDI entries from 36 data centres in 29 countries across Europe, covering many data types.

The CDI database is accessed through an on-line interface that gives users a detailed insight into the availability and geographical distribution of the marine data archived at the connected data centres. It also provides sufficient information to allow the user to assess the data relevance. Moreover, it gives the links for on-line access to data available from partner websites or for on-line request services. The CDI V0 gave users a unified index, but users still had to negotiate a multitude of different data access interfaces.

CDI V0 data search and retrieval dialogue – example for Russian data centre

Present coverage of CDI V0 data sets

Approach for SeaDataNet V1 and V2:

The approach, adopted for SeaDataNet V1 and V2, comprises developing the following services:

- Discovery services = Metadata directories

- Security services = Authentication, Authorization & Accounting (AAA)

- Delivery services = Data access & downloading of datasets

- Viewing services = Visualisation of metadata, data and data products

- Product services = Generic and standard products

- Monitoring services = Statistics on usage and performance of the system

- Maintenance services = Updating of metadata by SeaDataNet partners

These services are operated over a distributed network of interconnected Data Centres accessed through a central Portal (http://www.seadatanet.org/). In addition to service access the portal provides information on data management standards, tools and protocols.

The architecture has been designed to provide a coherent system based on V1 services, whilst leaving the pathway open for later extension with V2 services. For the implementation, a range of technical components have been defined. The majority is operational with the remainder in the final stages of development and testing. These make use of recent web technologies, and also comprise Java components, to provide multi-platform support and syntactic interoperability. To facilitate sharing of resources and interoperability, SeaDataNet has adopted SOAP Web Service technology. Software applications written in various programming languages on a range of platforms can use Web Services to exchange data over the Internet in a manner similar to inter-process communication on a single computer. The SeaDataNet architecture and components have been designed to handle all kinds of oceanographic and marine environmental data including both in-situ measurements and remote sensing observations.
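As an illustration of the language-neutral message exchange described above, the following minimal Python sketch builds and reads a SOAP 1.1 envelope using only the standard library. The service namespace, the operation name `lookupTerm` and the parameter `listKey` are hypothetical placeholders chosen for this example; they are not taken from the actual SeaDataNet service definitions.

```python
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
SERVICE_NS = "urn:example:marine-service"  # hypothetical service namespace


def build_soap_request(operation, params):
    """Return a SOAP 1.1 envelope (bytes) that calls `operation` with `params`."""
    ET.register_namespace("soap", SOAP_NS)
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    call = ET.SubElement(body, f"{{{SERVICE_NS}}}{operation}")
    for name, value in params.items():
        ET.SubElement(call, f"{{{SERVICE_NS}}}{name}").text = str(value)
    return ET.tostring(envelope, encoding="utf-8", xml_declaration=True)


def read_soap_body(message):
    """Parse an envelope and return (operation, params) from its Body."""
    root = ET.fromstring(message)
    call = root.find(f"{{{SOAP_NS}}}Body")[0]  # first child of Body is the call
    operation = call.tag.split("}", 1)[1]      # strip the "{namespace}" prefix
    params = {child.tag.split("}", 1)[1]: child.text for child in call}
    return operation, params


# Hypothetical operation and parameter names, for illustration only.
request = build_soap_request("lookupTerm", {"listKey": "P021"})
print(read_soap_body(request))
```

Because the envelope is plain XML, a client written in Java or any other language can produce or consume the same message, which is the property the architecture relies on.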

Interoperability:

Interoperability is the key to distributed data management system success and it is achieved in SeaDataNet by:

- Using common quality control protocols and flag scale

- Using controlled vocabularies from a single source that have been developed using international content governance

- Adopting the ISO 19115 metadata standard for all metadata directories

- Providing XML Validation Services to quality control the metadata maintenance, including field content verification based on Schematron.

- Providing standard metadata entry tools

- Using harmonised Data Transport Formats (NetCDF, ODV ASCII and MedAtlas ASCII) for data sets delivery

- Adopting OGC standards for mapping and viewing services

- Using SOAP Web Services in the SeaDataNet architecture

Quality Control guidelines:

A guideline (V1) of recommended QC procedures has been compiled, reviewing NODC schemes and other known schemes (e.g. WGMDM guidelines, World Ocean Database, GTSPP, Argo, WOCE, QARTOD, ESEAS, SIMORC, etc.). The guideline at present contains QC methods for CTD (temperature and salinity), current meter data (including ADCP), wave data and sea level data. Furthermore, a scheme of QC flags to be used in SeaDataNet to label individual data values has been defined and adopted.

The guideline (V1) has been compiled in discussion with IOC, ICES and JCOMM, to ensure an international acceptance and tuning. Important feedback originated from the joint IODE/JCOMM Forum on Oceanographic Data Management and Exchange Standards (January 2008), joined by SeaDataNet and international experts to consider on-going work on standards and to seek harmonisation, where possible.

Activities are now underway for extending the guideline with QC methods for surface underway data, nutrients, geophysical data, and biological data. The new guideline (V2) is expected around Summer 2009.

Common Vocabularies:

Use of common vocabularies in all metadatabases and data formats is an important prerequisite towards consistency and interoperability. Common vocabularies consist of lists of standardised terms that cover a broad spectrum of disciplines of relevance to the oceanographic and wider community. Using standardised sets of terms solves the problem of ambiguities associated with data markup and also enables records to be interpreted by computers. This opens up data sets to a whole world of possibilities for computer aided manipulation, distribution and long term reuse.

Therefore common vocabularies were set up and populated by SeaDataNet. Vocabulary technical governance is based on the NERC DataGrid (NDG) Vocabulary Server Web Service API. Non-programmatic access is provided to end-users by a client interface for searching, browsing and CSV-format export of selected entries. The API is compliant with the WS-I Basic Profile 1.1, which is adopted as the standard for all Web Services in SeaDataNet. The Vocabulary Server is populated with lists describing a wide range of entities relevant to marine metadata and data such as parameters, sea area names, platform classes, instrument types, and so on.

Content governance of the vocabularies is very important and is done by a combined SeaDataNet and MarineXML Vocabulary Content Governance Group (SeaVoX), moderated by BODC, and including experts from SeaDataNet, MMI, MOTIIVE, JCOMMOPS and other international groups. SeaVoX discussions are conducted via an e-mail list server.

Common Data Transport Formats:

As part of the V1 services, data sets are accessible via download services. Delivery of data to users requires common data transport formats, which interact with other SeaDataNet standards (Vocabularies, Quality Flag Scale) and analysis & presentation tools (ODV, DIVA). Therefore the following formats have been defined:

- SeaDataNet ODV4 ASCII for profiles, time series and trajectories

- SeaDataNet MedAtlas as optional extra format.

- NetCDF with CF compliance for gridded data sets

The ODV4 and MedAtlas formats have been extended with a SeaDataNet semantic header. International cooperation is underway between SeaDataNet, the CF community and UNIDATA on a common NetCDF format (Core Data Model - CDM) for the oceanographic and meteorological domains, including a semantic header.

Authentication, Authorization and Administration:

All the metadata systems and the website, including the information on standards and tools, are in the public domain. However, a Single Sign-on system is required for access to the distributed databases with data sets. This is not intended as a barrier for users, but ensures that users agree with the SeaDataNet data policy and allows SeaDataNet to become acquainted with its user community.

Users must register once in order to get a personal login name and password. This is done via a Web form on which the necessary information is provided. In this process, the user is required to accept the terms and conditions of the "SeaDataNet User Licence". After processing, the access credentials are sent to the user by e-mail. The so-called AAA services (Authentication, Authorization and Administration) are based upon a centralised CAS system. SeaDataNet thus maintains and uses a central user register, but the allocation of the user roles that determine data access rights is managed at the national level by SeaDataNet partners.

The User Licence is part of the SeaDataNet Data Policy, which is intended to be fully compatible with the Directive of the European Parliament and of the Council on public access to environmental information, the INSPIRE Directive, and the data access principles of IOC, ICES, WMO, GCOS, GEOSS and CLIVAR. The Licence incorporates the following terms:

- The Licensor grants to the Licensee a non-exclusive and non-transferable licence to retrieve and use data sets and products from the SeaDataNet service in accordance with this licence.

- Retrieval, by electronic download, and the use of Data Sets is free of charge, unless otherwise stipulated.

- Regardless of whether the data are quality controlled or not, SeaDataNet and the data source do not accept any liability for the correctness and/or appropriate interpretation of the data. Interpretation should follow scientific rules and is always the user's responsibility. Correct and appropriate data interpretation is solely the responsibility of data users.

- Users must acknowledge data sources. It is not ethical to publish data without proper attribution or co-authorship. Any person making substantial use of data must communicate with the data source prior to publication, and should possibly consider the data source(s) for co-authorship of published results.

- Data Users should not give to third parties any SeaDataNet data or product without prior consent from the source Data Centre.

- Data Users must respect any and all restrictions on the use or reproduction of data. The use or reproduction of data for commercial purpose might require prior written permission from the data source.

SeaDataNet V1 Discovery Services are operational:

The V1 Discovery Services build upon the V0 directory services, but considerable work has been undertaken to upgrade each of the directories and to harmonise the directories wherever possible. The logical format of each directory has been reviewed and streamlined and an XML schema developed by ISO19115 profiling. For common fields, common vocabularies have been defined or adopted, including additional population where necessary. Also the Vocabulary Server API has been upgraded for better support to the editing and retrieval tools.

A maintenance workflow has been defined and developed for each directory, supporting the production and exchange of updates as XML files. Depending on the directory, the following maintenance mechanisms are provided:

- Online maintenance via online Content Management System (CMS)

- XML export from local system

Local XML export can be produced by partners by:

- Using the data entry interface provided by the MIKADO Java tool. MIKADO interacts with the Web services of the vocabularies, EDMO and EDMERP and produces valid XML files that can be imported into the central V1 directories.

- Using MIKADO with a configuration file to export from local database(s) thereby generating XML files in bulk

- Using partners' own software

The XML files produced may be validated at source prior to directory submission by an XML Validation Web service that both verifies document structure and checks field content against the Vocabulary Server.
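The two-stage check described above (document structure first, then field content against controlled terms) can be sketched as follows. This is a simplified stand-in, not the SeaDataNet validation service: the real service works with XML schemas, Schematron rules and the Vocabulary Server, whereas the field names, term lists and record layout below are hypothetical and exist only for this example.

```python
import xml.etree.ElementTree as ET

# Hypothetical record structure and controlled term lists (illustrative only).
REQUIRED_FIELDS = ("title", "parameter", "sea_area")
VOCABULARY = {
    "parameter": {"TEMP", "PSAL"},
    "sea_area": {"North Sea", "Baltic Sea"},
}


def validate_record(xml_text):
    """Return a list of problems; an empty list means the record passed."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        return [f"not well-formed: {exc}"]

    problems = []
    for field in REQUIRED_FIELDS:
        element = root.find(field)
        text = (element.text or "").strip() if element is not None else ""
        if not text:
            # Structure check: the field must exist and must not be empty.
            problems.append(f"missing field: {field}")
        elif field in VOCABULARY and text not in VOCABULARY[field]:
            # Content check: the value must be a term from the controlled list.
            problems.append(f"unknown {field} term: {text}")
    return problems


record = "<record><title>CTD cast</title><parameter>Temp</parameter></record>"
for problem in validate_record(record):
    print(problem)
```

Running the check at source, before submission, means that a misspelt term such as `Temp` is caught by the partner rather than by the central directory.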

Finally new User Interfaces have been defined and implemented for each of the directories.

SeaDataNet V1 Data Delivery Services:

An important objective of the V1 system is to provide transparent access to the distributed data sets via a unique user interface at the SeaDataNet portal and download service.

In the SeaDataNet V1 architecture the Common Data Index (CDI) V1 provides the link between discovery and delivery. The CDI user interface enables users to have a detailed insight of the availability and geographical distribution of marine data, archived at the connected data centres, and it provides the means for downloading data sets in common formats via a transaction mechanism.

The SeaDataNet portal provides registered users access to these distributed data sets via the CDI V1 Service. There are 2 User Interfaces:

- CDI V1 - Quick Search

- CDI V1 - Extended Search

CDI V1 Quick Search interface

Both interfaces enable users to search for data sets by a set of criteria. The selected data sets are listed and their geographical locations are indicated on a map. Clicking on the display icon retrieves the full metadata of the data set. This gives information on the what, where, when, how, and who of the data set. It also gives standardised information on the data access restrictions that apply. The interfaces also feature a shopping mechanism, by which selected data sets can be included in a shopping basket.

All users can freely query and browse in the CDI V1 directory; however submitting requests for data access via the shopping basket requires that users are registered in the SeaDataNet central user register, thereby agreeing with the overall SeaDataNet User Licence.

All data requests are forwarded automatically from the SeaDataNet portal to the relevant data centres. This process is controlled via the Request Status Manager (RSM) service at the portal, which communicates with the data centres via the Download Manager (DM) Java software module installed at each data centre. Users receive a confirmation e-mail of their data set requests and a link to the RSM service. By logging in to the RSM service, users can regularly check the status of their requests and download data sets from the associated data centres after access has been granted. Each CDI V1 metadata record includes a data access restriction tag, which indicates under which conditions the data set is accessible to users. Its values range from 'unrestricted' to 'no access', with a number of values in between. During registration every user is assigned one or more SeaDataNet roles by their national NODC / Marine Data Centre. For each data set request, the RSM service combines the data access restriction with the role(s) of the user as registered in the SeaDataNet central user register. This determines, per data set request, whether the user gets direct access automatically, whether the request first has to be considered by the data centre (which may then contact the user), or whether no access is given.
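The three possible outcomes of a request can be illustrated with a small decision function. This is only a sketch of the idea of combining a restriction tag with user roles: the tags 'unrestricted' and 'no access' come from the text above, while the intermediate tags, the role names and the policy table are hypothetical and do not reproduce the actual RSM rules.

```python
from enum import Enum


class Decision(Enum):
    DIRECT = "direct access"
    REVIEW = "to be considered by the data centre"
    DENIED = "no access"


# Hypothetical policy table: restriction tag -> roles that get direct access.
# None means every registered user; an empty set means nobody automatically.
DIRECT_ROLES = {
    "unrestricted": None,
    "academic": {"academic"},
    "by negotiation": set(),
}


def decide(restriction, user_roles):
    """Combine a data set's restriction tag with the user's roles."""
    if restriction == "no access":
        return Decision.DENIED
    if restriction not in DIRECT_ROLES:
        return Decision.REVIEW  # unknown tag: leave the decision to the data centre
    allowed = DIRECT_ROLES[restriction]
    if allowed is None or allowed & set(user_roles):
        return Decision.DIRECT
    return Decision.REVIEW


for tag in ("unrestricted", "academic", "by negotiation", "no access"):
    print(tag, "->", decide(tag, ["commercial"]).value)
```

Keeping the policy in a table rather than in code mirrors the split described above: the tag is set per data set by the data centre, and the roles are assigned per user at national level.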

The actual delivery of data sets is done between the user and the selected data centre.

CDI V1 unified data search and retrieval dialogue

Presentation and analysis tools and data products:

SeaDataNet has adopted the freely available Ocean Data View (ODV) software package as its fundamental data analysis and visualization software. ODV provides interactive exploration, analysis and visualization of oceanographic and other geo-referenced profile or sequence data. It is available for all major computer platforms and currently has more than 10,000 registered users. ODV has a very rich set of interactive capabilities and supports a very wide range of plot types. This makes ODV ideal for visual and automated quality control. The latest release, ODV4, developed as part of SeaDataNet by partner AWI (Germany), overcomes many limitations of previous versions and now supports more flexible metadata models, an unlimited number of variables and custom quality flag schemes, and is fit for loading data sets in the SeaDataNet ODV ASCII format.

The ODV software is also being used in SeaDataNet for producing generic data products for each of the regional seas for various variables. In practice, in-situ measurements can be sparse and heterogeneously distributed. The DIVA software tool (Data-Interpolating Variational Analysis) allows those observations to be spatially interpolated (or analysed) onto a regular grid in an optimal way. The analysis is performed on a finite element grid, allowing for a spatially variable resolution and a good representation of the coastline and isobaths. As some areas of the European seas have complex coastlines, the finite-element grid of DIVA is able to resolve them adequately.

It is also possible to compute error maps for the gridded fields which reflect the accuracy of the observations and their distribution. This makes it possible to assess the reliability of the gridded fields and to objectively identify areas with poor coverage. In an approach similar to generalized cross-validation, the value of the gridded field can also be computed without taking a particular observation into account. By comparing this analysed value with the observation, one can establish how consistent that particular observation is with the remaining dataset. This information can be used by the data centres to identify bad data.
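The consistency check described above can be sketched as a leave-one-out comparison. Note that this example does not implement DIVA's variational analysis: it substitutes simple inverse-distance weighting as the interpolator, purely to show the principle of estimating a value without the observation under test. The coordinates, values and threshold are invented for the illustration.

```python
import math


def idw_estimate(target, observations, power=2.0):
    """Inverse-distance-weighted estimate at `target` from (x, y, value) tuples."""
    if not observations:
        raise ValueError("at least one observation is required")
    num = den = 0.0
    for x, y, value in observations:
        dist = math.hypot(x - target[0], y - target[1])
        if dist == 0.0:
            return value  # an observation sits exactly on the target point
        weight = dist ** -power
        num += weight * value
        den += weight
    return num / den


def leave_one_out_residuals(observations):
    """Observed value minus the estimate computed without that observation."""
    residuals = []
    for i, (x, y, value) in enumerate(observations):
        others = observations[:i] + observations[i + 1:]
        residuals.append(value - idw_estimate((x, y), others))
    return residuals


def suspect_indices(observations, threshold):
    """Indices of observations that disagree strongly with their neighbours."""
    residuals = leave_one_out_residuals(observations)
    return [i for i, r in enumerate(residuals) if abs(r) > threshold]


# Invented temperatures on a unit square, with one implausible value in the centre.
obs = [(0, 0, 10.0), (1, 0, 10.2), (0, 1, 9.9), (1, 1, 10.1), (0.5, 0.5, 25.0)]
print(suspect_indices(obs, threshold=10.0))
```

A single bad value also inflates the residuals of its neighbours, so in practice the threshold has to be chosen with care and flagged values reviewed before they are marked as bad.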

As a part of SeaDataNet, the DIVA method has been integrated into ODV, and the integration greatly facilitates the usage of DIVA. Features supported by the ODV/DIVA integration include proper treatment of domain separation due to land masses and undersea ridges or seamounts and the realistic estimation of water mass properties on both sides of the divides. This is important in areas, such as the Kattegat, with many islands separated by narrow channels.

The DIVA software can also load the data sets, prepared via the MEDATLAS QC software and other in-house QC systems. The command line interface of DIVA allows for batch processing of large data sets.


The EU's Maritime Policy Blue Book, welcomed by the European Council in December 2007, undertook to take steps towards a European Marine Observation and Data Network (EMODNET) that would improve availability of high quality data. As part of the roadmap and analysis for the future EMODNET the EU launched in July 2008 a call for tenders for creating pilot components of EMODNET. The overall objective is to migrate fragmented and inaccessible data into interoperable, continuous and publicly available data streams for complete maritime basins. The results will help to define processes, best technology and approximate costs of a final operational European Marine Observation and Data Network. It will also provide the first components for a final system which will in themselves be useful to the marine science community.

The call for tenders comprised 4 lots:

- hydrographic data

- marine geological data

- chemical data

- biological data

The SeaDataNet consortium successfully applied for implementing the EMODNET Chemical portal by using the SeaDataNet network of national data centres and its new V1 infrastructure, thereby demonstrating its abilities for handling and giving users access to the requested chemical data sets and products in combination with other multidisciplinary data sets. In the coming year the first release of the Chemical pilot will be prepared. The Regional Conventions (OSPAR, HELCOM, Black Sea Commission) have agreed to contribute to this process.

SeaDataNet is also involved in the implementation of the other 3 pilots, whereby common standards of SeaDataNet for e.g. vocabularies and metadata will be adopted for ensuring harmonisation between the 4 pilots. Moreover the SeaDataNet Common Data Index (CDI) portal will be populated by all pilots for indexing their background data sets.

Oceanographic and marine data include a very wide range of types of measurements and variables that are used across a broad, multidisciplinary spectrum of projects and activities. Geological and geophysical data are an important category, comprising analytical data and derived data products from seabed sediment samples, boreholes, borehole samples, geophysical surveys (seismic, gravity, magnetic) of the seabed and sub-seabed, cone penetration tests, and sidescan sonar surveys. Seismic and sonar data, together with samples and core data, are essential for developing a complete understanding of the geology of the near seabed. Samples provide point data; these points can be connected using seismic and sonar profiles in order to obtain a clear understanding of the lateral and vertical distributions of sediment packages, or of bedrock.

For Europe a major share of geological and geophysical observations for the oceans and seas is collected and analysed by national geological surveys and research institutions, performing field surveys and undertaking research cruises. In addition, substantial volumes of data are collected by industry, government departments, academia and environmental organisations, either directly or by sub-contractors. These additional data are often deposited with the geological surveys and research institutes. The national geological surveys have extensively sampled and surveyed the seabed and sub-seabed of the European seas over recent decades. Research institutes complement this with samples, cores and seismic data, both from the European seas and the world oceans.

Figure: Coverage of sea-bed samples and cores from the Baltic, North Sea, Channel and Celtic Sea (see http://www.eu-seased.net)

Figure: Coverage of seismic and acoustic data (sea-bed and sub sea-bed) held by the geological survey partners. (see http://www.eu-seased.net)

Nowadays the data and data-products are managed by the national geological surveys and research institutes as digital records in local databases. Increasingly these 'primary' data are made available via the websites of the organisations, mostly by means of catalogues, and in some cases by online data access facilities.

A consortium, built within the framework of EuroGeoSurveys, comprising the national Geological Surveys of all fifteen EU countries and Norway, has applied successfully for the FP7 Geo-Seas project. The overall objective of Geo-Seas is to build and deploy a unified marine geoscientific data infrastructure within Europe. This will result in a major and significant improvement in locating, accessing and delivering federated marine geological and geophysical data and data-products from national geological surveys and research institutes in Europe to the community. Examples of primary datasets and data products that can be delivered by Geo-Seas to the user communities are bathymetric data and digital terrain models, lithological data, sediment grain-size data and geotechnical data. These types of data are important inputs to predictive modelling systems, and environmental monitoring and management networks. Geo-Seas will upgrade the present http://www.eu-seased.net/ portal.

The Geo-Seas partnership has taken a strategic decision to adopt the SeaDataNet interoperability principles, architecture and components wherever possible. This approach will allow the Geo-Seas project to gain instant traction and momentum whilst avoiding wasteful duplicative effort. It is envisaged that the SeaDataNet infrastructure will provide a core platform that will be adaptively tuned in order to cater for the specific requirements of the geological and geophysical domain. A range of additional activities for developing and providing new geological and geophysical data products and services will also be undertaken in order to fulfil the diverse needs of end-user communities.

The Geo-Seas project started in May 2009 and will continue for 42 months. There will also be interaction with the EMODNET geology pilot and the One-Geology project. Geo-Seas receives co-funding from the EU FP7 Research Infrastructures programme.

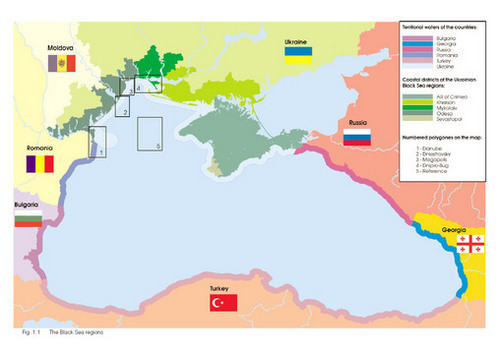

In January 2009 the Upgrade Black Sea Scientific Network project began. It is undertaken by 51 partners, of which 43 are located in the Black Sea countries. A major role is played by the 6 Black Sea NODCs, which are also partners in SeaDataNet. The project supports the NODCs in further developing their national networks for ocean and marine data management, and in harmonising their practices and standards within the Black Sea region and in line with the SeaDataNet approaches.

The predecessor FP6 RI Black Sea SCENE project already established a Black Sea Scientific Network of leading environmental and socio-economic research institutes, universities and NGOs from the countries around the Black Sea and provided the basis for a Black Sea data and information infrastructure. It stimulated scientific cooperation, exchange of knowledge and expertise, and strengthened the regional capacity and performance of marine environmental data & information management.

The new UP-GRADE BS-SCENE project:

The partnership comprises 41 Black Sea institutes holding data sets. A major objective of the project is to bring all these 41 Black Sea data centres to the same level of marine data management and to realise an operational infrastructure that gives a harmonised overview of, and access to, their datasets. To this end the data centres will use the new SeaDataNet tools to populate the upgraded V1 versions of the European Directory of Marine Environmental Datasets (EDMED), European Directory of Marine Environmental Research Projects (EDMERP), European Directory of Marine Organizations (EDMO) and Directory of Cruise Summary Reports (CSR) with inputs from their organisations. This activity will be coordinated at national level by the NODCs that are partners in both SeaDataNet and this project. Moreover the data centres will implement the CDI V1 system for providing online access to their data sets as part of the SeaDataNet infrastructure. Furthermore a regional Black Sea portal will be deployed, providing online access to all services and additional information products & services for the Black Sea region.

You can follow the project and developments at: http://www.blackseascene.net

The project receives co-funding from the EU FP7 Research Infrastructures programme for a 3 year period from 2009 - 2011.

The SeaDataNet Virtual Ocean Data Centre is a distributed infrastructure that provides transnational access to marine data, meta-data, products and services through 40 interconnected Trans National Data Access Platforms (TAP) from 35 countries around the Black Sea, Mediterranean, North East Atlantic, North Sea, Baltic and Arctic regions.

This newsletter is presenting some of the Data Centres of the SeaDataNet network.

- The Center of Marine Research - Lithuania

- Instituto Español de Oceanografía (IEO) - Spain

- Ente per le Nuove tecnologie, l'Energia e l'Ambiente (ENEA) - Italy

General Introduction:

The Center of Marine Research (CMR) is a subordinate institution of the Ministry of the Environment of the Republic of Lithuania responsible for permanent environmental research in the Baltic Sea and the Curonian Lagoon. CMR has recently taken over responsibility for the monitoring of surface fresh water bodies, wastewater and air quality in the western part of Lithuania as well.

Its objectives are:

- To provide data and products to public institutions, research community and academia on the coastal areas, the Baltic and the Curonian Lagoon

- To provide general information on the marine environment to the public by means of dedicated web pages

A database of marine data is compiled from data gathered during cruises in the Baltic Sea and the Curonian Lagoon (37 stations) and from the coastal hydrometeorological stations (CMR has 8 stations along the entire Lithuanian coast of the Baltic Sea and the Curonian Lagoon). Data gathered by the oceanographic buoy will be included in the database in the near future.

Figure 1. Lithuanian monitoring stations in the Baltic Sea and the Curonian Lagoon

There is no online access to cruise data. Some data from the coastal hydrometeorological stations are updated every day for the public on the web page http://www.jtc.lt/ (fig. 3, 4).

Figure 2. The main page of the website

Figure 4. Daily information on water temperature, water level and salinity in the Klaipeda port

CMR is implementing a project together with the Finnish Ministry of the Environment to launch an oceanographic buoy in Lithuanian marine waters this year; its data will also be published on the CMR web page. The oceanographic buoy will be operated near the Butinge oil terminal. The data will be important not only to authorities, but also to the public.

CMR will launch another project this year, aiming at modernising the coastal hydrometeorological stations. Daily information will be published on the web page. Institutions and scientists can check on the web page what kind of investigations are performed by CMR. All monitoring data are available to everyone on request.

Services offered by CMR:

- Offline delivery of data on request

- View services of data in near real time and delayed mode

- General information to the public

Introduction:

Since 1994 the Data Centre of the Spanish Oceanographic Institute has been developing a system for archiving and quality control of oceanographic data. The work started in the frame of the European Marine Science & Technology Programme (MAST) when a consortium of several Mediterranean Data Centres began to work on the MEDATLAS project.

Over the years, old software modules for MS-DOS were rewritten, improved and migrated to the Windows environment. Oceanographic data quality control now includes not only vertical profiles (mainly CTD and bottle observations) but also time series of currents and sea level observations. Powerful new routines for analysis and graphic visualization were added. Data originally held in ASCII format were recently organized in an open source MySQL database.

Nowadays the IEO, as part of the SeaDataNet infrastructure, has embarked on the design of a new information system, consistent with the ISO 19115 and SeaDataNet standards, in order to manage the large and diverse marine data and information originating in Spain from different sources. The system works with data stored in ASCII files (MEDATLAS, ODV) as well as data stored within the relational database. Normally, data types with a wide range of parameters but without very large datasets are stored within the relational database.

Components of the system:

- MEDATLAS Format and Quality Control

- QCDAMAR: Quality Control of Marine Data. Main set of tools for working with data presented as text files. Includes extended quality control (searching for duplicated cruises and profiles, checking date, position, ship velocity, constant profiles, spikes, density inversion, sounding, acceptable data, impossible regional values, ...) and input/output filters. Specially developed plotting tools with a zoom option allow visual analysis of ship tracks and of station positions with their corresponding sounding values over real isobaths in any zone of the globe. Different types of plots of chosen oceanographic parameters (single, multiple, waterfall, overlapped) help to see errors detected during automatic control. Programming languages: Visual Fortran and C++; operating system: Windows.

- QCMareas: A set of procedures for the quality control of tide gauge data according to the standards of the international Sea Level Observing System. These procedures include checking for unexpected anomalies in the time series, interpolation, filtering, computation of basic statistics and residuals. Data can be flagged for errors, plotted using various types of plots and zoomed, thus helping the researcher to identify and fix faults rapidly. Programming language: C++; operating system: Windows.

- Relational Data Base

- DAMAR: A relational database (MySQL) designed to manage a wide variety of marine information, such as:

- Common Vocabularies:

- BODC group parameter

- extended GF3 (Medatlas parameters)

- standard units & conversion algorithms

- codes for countries, ships, instruments, etc

- Catalogues: CSR & EDIOS

- Data & Metadata

- metadata for all marine disciplines

- data values

- Loading the Database

- Import_DB: a tool developed in PHP to import the ASCII files into the database. A PHP script loads the data and metadata from ASCII files in MEDATLAS format into the database. In this process, information relevant to the interoperability of the SeaDataNet system is kept, e.g. whether the data are stored only in the original files or in the database, as well as the link to the original file.

- Data Query

- SelDamar: a program written in Visual Basic .NET and in PHP for local and web access. The system allows selective retrievals through a user interface that searches for the metadata records meeting the criteria entered by the user, such as geographical bounds, data responsible, cruises, platform, time periods, etc.

As the search result, the system produces a file in ODV format containing the data stored in the database, and a configuration file (index file) with information about the records that meet the criteria but are stored in their original files.

- ExtractDAMAR: a FORTRAN programme that automatically extracts the data from the files (profiles, time series, etc.) using the information provided in the configuration files and exports them to another file in ODV format, also performing a unit conversion.

- SeaDataNet Interoperability MIKADO: a tool developed by IFREMER in Java to generate the Common Data Index (CDI) with the information related to the metadata loaded in the IEO database (DAMAR). Once the CDIs are generated and loaded into the SeaDataNet database, users can query the IEO data directly from the SeaDataNet Portal. Please check the SeaDataNet CDI V1 interface to query the present availability and to access the IEO data sets.
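The retrieval flow described above for SelDamar and ExtractDAMAR can be illustrated with a minimal Python sketch. It is not the IEO implementation (which is written in Visual Basic .NET, PHP and FORTRAN); the record fields, function names and file names are hypothetical and only show how selected metadata records might be split between those held in the database and those listed in an index for later extraction from the original files.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MetadataRecord:
    """Hypothetical metadata row; field names are illustrative only."""
    record_id: int
    cruise: str
    latitude: float
    longitude: float
    year: int
    in_database: bool                  # True if the data values were loaded into the database
    source_file: Optional[str] = None  # link to the original ASCII file


def select_records(records, south, north, west, east, first_year, last_year):
    """Return the records that meet the geographic and time criteria."""
    return [
        r for r in records
        if south <= r.latitude <= north
        and west <= r.longitude <= east
        and first_year <= r.year <= last_year
    ]


def split_by_storage(selected):
    """Separate records held in the database from those kept in original files.

    The first list would feed the ODV export; the second plays the role of
    the index (configuration) file passed on to the extraction step.
    """
    from_database = [r for r in selected if r.in_database]
    index_entries = [(r.record_id, r.source_file) for r in selected if not r.in_database]
    return from_database, index_entries


records = [
    MetadataRecord(1, "C1", 36.5, -4.0, 2001, True),
    MetadataRecord(2, "C1", 37.0, -3.0, 2003, False, "c1_ctd.txt"),
    MetadataRecord(3, "C2", 43.0, -9.0, 1995, False, "c2_ctd.txt"),
]
selected = select_records(records, 35, 38, -6, 0, 2000, 2005)
from_database, index_entries = split_by_storage(selected)
```

Keeping the storage flag and file link in the metadata, as the import step does, is what lets a single query serve both storage modes.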

The ENEA Ships Of Opportunity Mediterranea portal:

The Mediterranean Forecasting System is providing data and forecast products for the entire Mediterranean and sub-regional areas. ENEA is contributing, inter alia and together with other institutions, to data collection with ships of opportunity. The data are transmitted to ENEA, where they are processed in near real time and made available free of charge to any kind of user.

The architecture developed for the MOON-VOS portal is based on the idea that the software must be freely available in the net and the re-use must be assured in many fields of application. It includes the following elements: Bus, Right Management, Registry, Network Services.

The functional scheme for discovery, selection, download is herewith reported.

The functions are viewed by the users through an Internet browser. The graphical interface is divided into frames allowing (figure 3):

- the selection of the stations on the base of cruises, parameters, geographical location, time,

- the response frame providing the view of the selected stations; the selection can be refined in this frame;

- display of selected stations by query;

- other links and title frames.

User interface

Logical frames of the interface

Setting selection criteria (left frame)

Google maps API:

Functions:

- Zoom, Pan

- Lat, Lon cursor position on screen

- Rectangular Zoom

- Map, Satellite, Hybrid chart

- Dynamic bounds View

- Navigation area menu

The user selects the geographic area. Every time the rectangular window is moved, the number of stations appears in the left frame.

Selection of data:

Function:

- Temporal interval: a window of 20 years is provided by default; dates can be changed by the users

- Measure type, Parameter, Typology, Cruise: only options that are linked to entries in the database are listed.

- Operation type: two choices are possible: view the number of stations, or display the position and temporal table.


All query conditions are combined with AND. If an option is left as ALL, it is not used for the selection. If a CRUISE is selected, only this option is considered in the station selection.
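These rules can be sketched as a small query builder. This is only an illustration under stated assumptions: the table name `stations`, the column names and the `?` placeholder style are hypothetical and not taken from the portal, and the temporal and geographic criteria are left out for brevity.

```python
# Hypothetical column names for the selectable options.
ALLOWED_COLUMNS = ("measure_type", "parameter", "typology", "cruise")


def build_station_query(criteria):
    """Build a parameterised SQL query from the selection criteria.

    `criteria` maps an option name to the chosen value; "ALL" (or a missing
    key) means the option does not constrain the selection.
    """
    cruise = criteria.get("cruise", "ALL")
    if cruise != "ALL":
        # A cruise selection overrides every other option.
        active = [("cruise", cruise)]
    else:
        # Options left at ALL are ignored.
        active = [
            (column, criteria[column])
            for column in ALLOWED_COLUMNS
            if criteria.get(column, "ALL") != "ALL"
        ]

    sql = "SELECT station_id FROM stations"
    if active:
        # The remaining conditions are combined with AND.
        sql += " WHERE " + " AND ".join(f"{column} = ?" for column, _ in active)
    return sql, [value for _, value in active]


sql, params = build_station_query(
    {"measure_type": "XBT", "parameter": "ALL", "typology": "profile"}
)
```

Only column names from the fixed list are ever placed in the SQL text, and the values are passed separately as parameters, so user input never becomes part of the statement itself.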

After selection, the user can view the temporal distribution of the stations, and of the cruises to which they belong, by clicking on View NUMBER of selected stations.

Alternatively, the user can obtain a Google Map showing the spatial distribution by clicking on View SUMMARY for selected stations.

Response to query (right frame)


The SQL query defined with the selection criteria is sent to the database through the http server.

The response to the query is shown in the right frame. In the upper part the defined criteria are reported. In the center the positions of the selected stations are shown in a Google map. In the lower part the yearly and monthly distribution of stations is shown in a table.
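A table of this kind can be derived from the station dates alone. The following is a minimal sketch, assuming each selected station carries a date; the function names and the row layout are illustrative and do not reproduce the portal's actual output.

```python
from collections import Counter
from datetime import date


def monthly_distribution(station_dates):
    """Count stations per (year, month) from a list of station dates."""
    return Counter((d.year, d.month) for d in station_dates)


def format_table(counts):
    """Render one row per year with twelve monthly counts."""
    rows = []
    for year in sorted({y for y, _ in counts}):
        # A Counter returns 0 for months without stations.
        cells = " ".join(f"{counts[(year, month)]:3d}" for month in range(1, 13))
        rows.append(f"{year} {cells}")
    return "\n".join(rows)


dates = [date(2005, 1, 3), date(2005, 1, 20), date(2005, 7, 1), date(2006, 12, 31)]
counts = monthly_distribution(dates)
table = format_table(counts)
```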

The user can use the typical Google Map API functions to navigate on the map. Furthermore, the user can click on a station, whose attributes will then appear in the upper frame. Three commands are available here:

"Meta": allows the visualization of metadata.

"Graph": allows the graphical visualization of products (vertical profiles, time series, images)

"Data": allows the alpha-numeric values of products to be viewed in table form.


Furthermore, other services have been added.

Polygon: allows the selection of stations in a certain area in order to produce horizontal maps. These will be presented overlaid on Google Map.

Polyline: allows the selection of stations to produce vertical sections.


Below the Google Map, a table gives the temporal distribution of stations by year and month. Another table lists the cruises to which the stations belong. Numbers and cruises in the two tables can be selected by clicking on them.

Clicking on any pixel of the image overlaid on the Google map returns the SST value at that point. This is done by accessing the corresponding NetCDF file.
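How a clicked position might be turned into a value lookup can be sketched as follows. This is an assumption-laden illustration, not the portal's code: it supposes the SST field lies on a regular latitude/longitude grid and represents it as a nested list, leaving out the actual reading of the NetCDF file. All names and grid parameters are hypothetical.

```python
def grid_index(lat, lon, lat0, lon0, dlat, dlon, n_rows, n_cols):
    """Return (row, col) of the regular-grid cell nearest to a clicked position.

    lat0/lon0 are the coordinates of cell (0, 0); dlat/dlon are the grid steps.
    Returns None when the position falls outside the grid.
    """
    row = round((lat - lat0) / dlat)
    col = round((lon - lon0) / dlon)
    if 0 <= row < n_rows and 0 <= col < n_cols:
        return row, col
    return None


def sst_at(grid, lat, lon, lat0, lon0, dlat, dlon):
    """Look up the SST value of the grid cell nearest to (lat, lon)."""
    index = grid_index(lat, lon, lat0, lon0, dlat, dlon, len(grid), len(grid[0]))
    if index is None:
        return None
    row, col = index
    return grid[row][col]


# Hypothetical 2 x 3 grid starting at 35.0 N, 10.0 E with 0.5 degree steps.
sst_grid = [
    [14.0, 14.5, 15.0],
    [15.5, 16.0, 16.5],
]
value = sst_at(sst_grid, 35.4, 10.9, 35.0, 10.0, 0.5, 0.5)
```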

If in situ stations are also available for the day of the satellite image, they are shown on the map. The stations can be selected and viewed as metadata, graphs or data. In this case it is possible to compare satellite and in situ data.


Graph Satellite View (SST)

Example: Vertical contour

Using "Select station with polyline" it is possible to select stations. After clicking on Send selection, they will be shown in the Google map. It is then possible to select a vertical interval and a parameter and click on the VC button. A section map will be produced.

The interpolation parameters of the graph can be changed by the user.

Example: horizontal contour

"Select station with polygon" allows the selection of a polygonal area. All stations inside the polygon are selected (Send selection). The user can choose the depth interval and the parameter to be mapped and click on HC (Horizontal contour). The horizontal map is overlaid on a Google map. The user can change the parameters of interpolation.
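Selecting the stations inside a polygon is commonly done with a ray-casting test. The sketch below shows that general technique under simple assumptions (planar coordinates, stations as dictionaries with hypothetical `lon` and `lat` keys); the method actually used by the portal is not documented here.

```python
def point_in_polygon(lon, lat, polygon):
    """Ray-casting test: True if (lon, lat) lies inside the polygon.

    `polygon` is a list of (lon, lat) vertices; the last vertex is joined
    back to the first. Coordinates are treated as planar.
    """
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does a horizontal ray from the point cross this edge?
        if (y1 > lat) != (y2 > lat):
            x_cross = x1 + (lat - y1) * (x2 - x1) / (y2 - y1)
            if lon < x_cross:
                inside = not inside
    return inside


def select_stations(stations, polygon):
    """Keep the stations whose position falls inside the polygon."""
    return [s for s in stations if point_in_polygon(s["lon"], s["lat"], polygon)]


area = [(10.0, 40.0), (14.0, 40.0), (14.0, 44.0), (10.0, 44.0)]
stations = [
    {"name": "A", "lon": 12.0, "lat": 42.0},
    {"name": "B", "lon": 15.0, "lat": 42.0},
    {"name": "C", "lon": 11.0, "lat": 39.0},
]
selected_names = [s["name"] for s in select_stations(stations, area)]
```

Points lying exactly on an edge may fall on either side with this test, which is usually acceptable for an interactive selection.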

SeaDataNet connectivity:

The ENEA data sets from the Ships Of Opportunity Mediterranea portal are also fully indexed and accessible via the SeaDataNet Portal. Please check the SeaDataNet CDI V1 interface to query the present availability and to access these data sets.

The EGU General Assembly 2009 was held from 19 to 24 April 2009 in Vienna - Austria with many thousands of participants and a very interesting programme of lectures. SeaDataNet was presented at 2 sessions with an overall presentation and a number of associated detailed presentations:

Furthermore SeaDataNet was presented in:

- ESSI Splinter session - The Implementation of International Geospatial Standards for Earth and Space Sciences with conveners Stefano Nativi - CNR and George Percivall - OGC

The Earth and space sciences are implementing international open standards for discovery, access and processing of geospatial information. These standards provide for interoperability well tuned to the Earth and space sciences, because members of the same community developed the standards. This session showed some of the latest advances in implementing open standards for access to sensor data, processing of the data suitable for a specific decision or research context, and presentation of the information to the various communities ranging from researchers, policy makers and general public. The outcome of the session is included in the feedback to the standards bodies (OGC, ISO, etc.) to further advance the standards applicability to Earth and space sciences.

You can find the presentations at the OGC website at: http://www.ogcnetwork.net/node/525