
SeaDataNet (2006 - 2011), funded by the EU as an FP6 Research Infrastructures project, is actively operating and further developing a Pan-European infrastructure for managing, indexing and providing access to ocean and marine data sets and data products, acquired from research cruises and other observational activities in European marine waters and global oceans. It is undertaken by the National Oceanographic Data Centres (NODCs), and marine information services of major research institutes, from 35 coastal states bordering the European seas, and also includes Satellite Data Centres, expert modelling centres and the Intergovernmental Oceanographic Commission (IOC) of UNESCO, International Council for the Exploration of the Sea (ICES) and EU Joint Research Centre (EU-JRC) in its network.

SeaDataNet has focused, with success, on establishing common standards and on applying those standards for interconnecting the data centres enabling the provision of integrated online access to comprehensive sets of multi-disciplinary, in situ and remote sensing marine data, metadata and products. The SeaDataNet architecture has been designed as a multidisciplinary system from the beginning. It is able to support a wide variety of data types and to serve several sector communities. SeaDataNet is also actively sharing its technologies and expertise, spreading and expanding its approach, and building bridges to other well established infrastructures in the marine domain. This has resulted in adoption and an active role in related data management projects, for example, the FP7 projects Geo-Seas, Upgrade Black Sea SCENE and EUROFLEETS.

At EU level a number of initiatives have been launched for which SeaDataNet has qualified itself as an important infrastructure. There is the Marine Strategy Framework Directive (MSFD) that will be aided by an overarching European Marine Observation and Data Network (EMODNet). EMODNet will be a network of existing and developing European observation systems, linked by a data management structure covering all European coastal waters, shelf seas and surrounding ocean basins. It must facilitate long-term and sustainable access to the high-quality data necessary to understand the biological, chemical and physical behaviour of seas and oceans. EMODNet will underpin, and provide data to WISE-Marine, the marine component of the EEA's Shared Environmental Information System (SEIS). WISE-Marine is intended to fulfil the reporting obligations of the Marine Strategy Framework Directive and to inform the European public on indicators for Good Environmental Status of sea basins. EMODNet is coordinated at EU level with the INSPIRE directive and large-scale framework programmes on European and global scales (GMES and GEOSS), that urge access to, and exchange of, environmental data and information.

The SeaDataNet consortium and infrastructure has acquired a leading role in the further development and actual implementation of the EMODNet data management structure, including seeking practical interoperability solutions towards GMES, GEOSS, and WISE-Marine.

SeaDataNet has established a close cooperation with EuroGOOS, the association of national governmental agencies and research organisations committed to European-scale operational oceanography within the context of the intergovernmental Global Ocean Observing System, and the MyOcean consortium, that is actively implementing the GMES Marine Core Service, aiming at deploying pan-European capacity for Ocean Monitoring and Forecasting. The cooperation focuses on improving the availability of high quality and harmonised physical oceanography data sets in real-time and delayed mode, as long term archives, in support of operational oceanography. Recently a successful bid was made to implement the EMODNet Physics pilot portal in a cooperation between EuroGOOS, MyOcean and SeaDataNet. A comparable cooperation is underway with the satellite data community as part of the FP7 GENESI-DEC project to seek improved exchange of marine and ocean in situ and satellite data sets of use for various applications and users.

All these spin-off projects and the active involvement in the EMODNet pilots contribute in practice to an expansion of the SeaDataNet infrastructure, both in the number of connected data centres (progressing from 50 now to circa 90 in the near future) and in the volume of accessible data sets. They also lead to a fine-tuning of the SeaDataNet common standards to make them fit for a wider scope of marine and ocean data types, such as marine chemistry, geological, geophysical and bathymetric data sets, next to the classical oceanographic data types. Moreover, the EMODNet pilots provide an excellent opportunity to engage and reach out to other external institutes, which receive information and training in the application of SeaDataNet practices. Finally, the MSFD-related projects provide an opportunity to discuss with policy and decision makers from the EU and member states the future sustainability of the SeaDataNet infrastructure as a core element in the EMODNet data management infrastructure.

This Newsletter presents the current state of implementation of the SeaDataNet infrastructure and services, highlighting a number of key achievements. The Newsletter also gives information on the progress of the related projects mentioned above. Finally, an outlook is given on the targets of the SeaDataNet II proposal that has been submitted to the EU for a seamless continuation and further development of the SeaDataNet infrastructure and its common standards. This proposal has recently received a favourable evaluation and we very much hope to be invited for negotiation with the EU.

We hope you enjoy the newsletter and will be encouraged to visit the SeaDataNet portal (http://www.seadatanet.org) to try out its services.

Giuseppe Manzella - chief editor and Dick M.A. Schaap - Technical Coordinator

The SeaDataNet project (2006 - 2011) is nearing its end and has achieved its objectives by developing and implementing an operational pan-European infrastructure for marine and ocean data management that provides users and data centres with a range of harmonised services, products, standards and software tools.

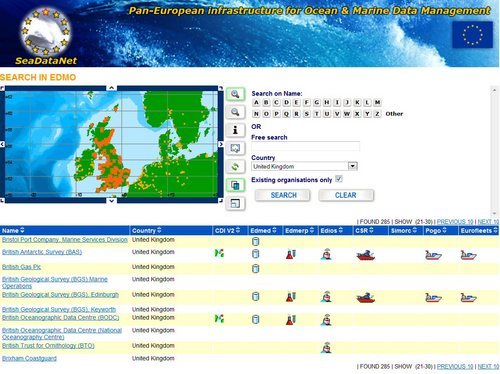

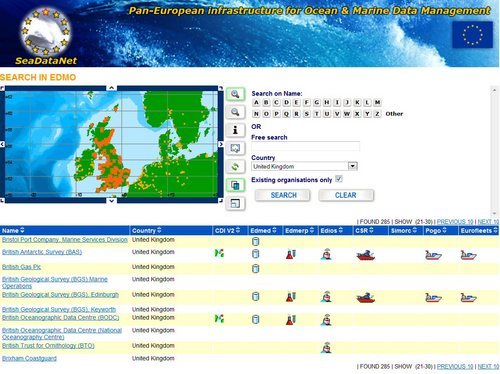

The SeaDataNet portal gives overviews of marine organisations in Europe and their engagement in marine research projects, managing large data sets, and data acquisition through research cruises and monitoring programmes and networks in European waters and the global oceans. These overviews are available for query as the following pan-European metadata services:

- EDMO: European Directory of Marine Organisations (>2200 entries)

- EDMED: European Directory of Marine Environmental Data sets (> 3000 entries)

- EDMERP: European Directory of Marine Environmental Research Projects (> 2500 entries)

- CSR: Cruise Summary Reports (> 31500 entries)

- EDIOS: European Directory of Ocean-observing Systems (> 270 programme entries for the UK alone and many underway for other European countries as part of a major updating)

At the start of the project all these directories had a different set-up and structure, but during SeaDataNet all directories have been harmonised and mutually tuned in formats, use of syntax and semantics by using the SeaDataNet common vocabularies. The directories are compiled from national contributions, collated by SeaDataNet partners for their country. This is done by using the common MIKADO XML editor that can work in manual and in automatic mode coupled to a local metadatabase. Additionally all online user interfaces of these directories have been harmonised while managed by different European task managers within SeaDataNet. From the EDMO interface it is possible to oversee and retrieve crossrelated entries in each of the other directories for each organisation.

Figure 1: EDMO results with crossrelations to other directories

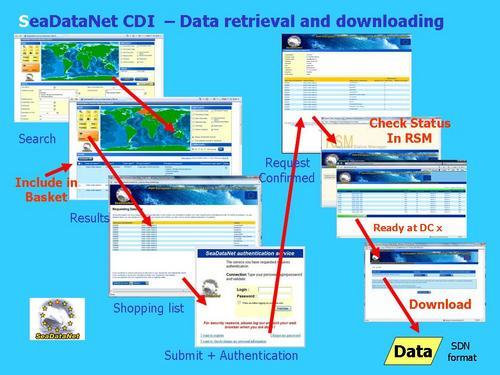

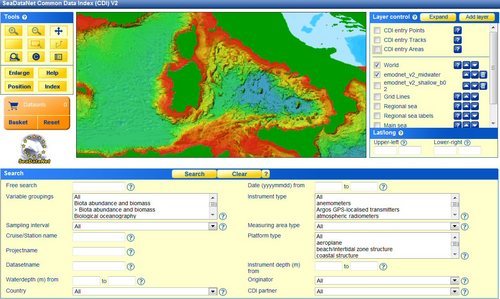

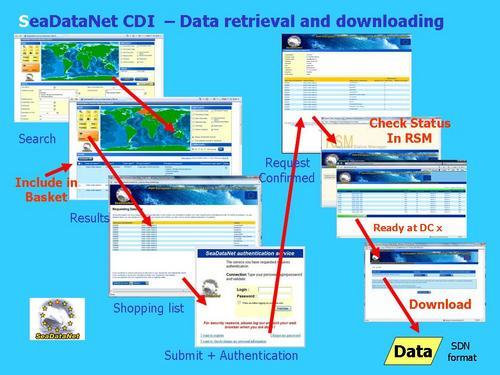

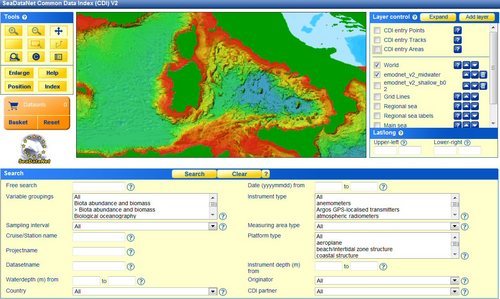

A major service at the SeaDataNet portal is the Common Data Index (CDI) Data Discovery and Access Service that provides users a unified access to the large volumes of marine and oceanographic data sets. These data sets are managed in a distributed way by the SeaDataNet data centres and other data centres that are joining the infrastructure as part of the SeaDataNet spin-off projects such as Geo-Seas, Upgrade Black Sea SCENE, CASPINFO, and various EMODNet pilots. These projects are described in more detail in other articles in this Newsletter.

For that purpose an intelligent middle tier connection is configured between the SeaDataNet portal and the local data management systems at each of the data centres. The SeaDataNet Download Manager java tool is installed locally to handle all communication with the portal by means of web services and to process requests for data by users of the portal. The CDI service gives users a highly detailed insight in the geographical coverage, and other metadata features of data across the different data centres including possible data access restrictions. The CDI format is based upon the ISO 19115 content model and it is used to describe individual field observations. This can be a sample or core but also a time series, trajectory or survey. CDI XML records are produced by applying the MIKADO XML editor, most preferably in automatic mode on a local metadata base.

Users can request access to identified datasets in a harmonised way, using a shopping basket. Users can submit a shopping request for multiple data providers in one go and can follow its processing by each of the providers via an online transaction register. If access is granted, users can download the data sets directly from the providers through the transaction register in the SeaDataNet standard data exchange formats. In some situations the data centre will first contact the user to discuss and negotiate access.

Figure 2: Data Shopping dialogue

The routing of requests is regulated by the data access restriction indicated in each CDI record and the role registered for each user. Users are required to register in the SeaDataNet user register. This is for reasons of licensing, disclaimer and use tracking, and to enable users to follow the processing of their orders over time, possibly with different access restrictions and from multiple data centres. The registration request is processed by the NODC of the user's country, after which the user receives a user id, a password and a role. With the user id and password, shopping orders can be submitted and the user can log on to the transaction register to look up the progress of submitted requests and to download data sets that have been made available. During registration a user agrees to the SeaDataNet Data Policy and licence, which defines the rules for data centres and users.
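The routing principle described above can be illustrated with a minimal Python sketch. It is not the SeaDataNet implementation: the restriction labels, the roles, the `CdiRecord` class and both function names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical restriction labels and user roles (assumptions for this sketch,
# not the actual SeaDataNet vocabularies).
ALLOWED_ROLES = {
    "unrestricted": {"public", "academic", "partner"},
    "academic": {"academic", "partner"},
    "by negotiation": set(),  # always requires contact with the data centre
}


@dataclass(frozen=True)
class CdiRecord:
    cdi_id: str
    data_centre: str
    restriction: str


def route_request(record, user_role):
    """Return 'deliver' if the role satisfies the restriction, else 'negotiate'."""
    allowed = ALLOWED_ROLES.get(record.restriction, set())
    return "deliver" if user_role in allowed else "negotiate"


def route_basket(basket, user_role):
    """Split one shopping basket into per-data-centre lists of (id, outcome)."""
    per_centre = {}
    for record in basket:
        outcome = route_request(record, user_role)
        per_centre.setdefault(record.data_centre, []).append((record.cdi_id, outcome))
    return per_centre


basket = [
    CdiRecord("cdi-001", "centre-A", "unrestricted"),
    CdiRecord("cdi-002", "centre-B", "academic"),
    CdiRecord("cdi-003", "centre-B", "by negotiation"),
]
result = route_basket(basket, "public")
```

In this sketch a single basket is split per data centre, which mirrors the idea that one shopping request can address multiple providers while each provider handles its own part.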

To deliver in common data formats, data sets have been pre-processed by data centres to the agreed formats, for example using the NEMO software. As alternative, local queries can be formulated and stored to support the Download Manager to retrieve data sets from local databases and to convert these to the agreed formats on-the-fly. Downloaded data sets can be analysed and visualised using the following SeaDataNet software:

- Versatile Ocean Data View (ODV) data analysis and visualisation software package

- Sophisticated statistical DIVA tool for making comparable gridded data products at regional and global scale from merged in situ and satellite datasets

The CDI user interfaces have been further developed and fine-tuned during the project to give users a very versatile way to query and locate interesting data sets and to retrieve these through the shopping process. Two interfaces are provided:

This way a vast and rapidly increasing resource of marine and ocean datasets, managed by an increasing number of distributed data centres, is operationally available through the CDI service. At present it provides metadata and access to more than 850000 data sets, originating from more than 300 organisations in Europe, covering physical, geological, chemical, biological and geophysical data, and acquired in European waters and global oceans. Already more than 50 data centres from 35 countries are connected. Under influence of the related projects the present scope is being expanded to a total of 90 data centres while the number of data sets will soon go beyond the milestone of 1 million data sets.

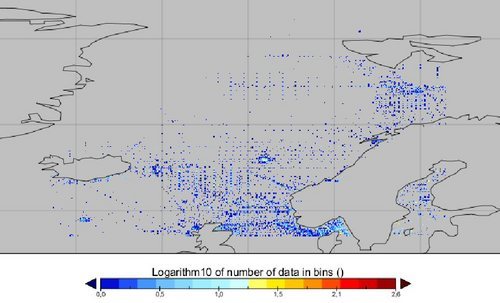

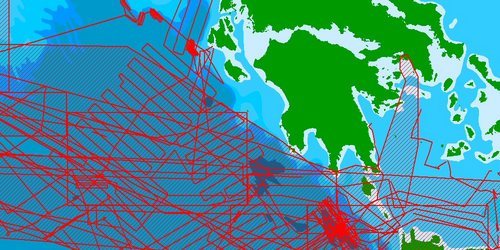

Figure 3: Overview of CDI entries per March 2011: >850000 data sets from 300+ originators and 50+ connected data centres

In the last year a number of refinements and new functionalities have been added to the CDI service to make it very friendly for users and more open for exchange with other systems:

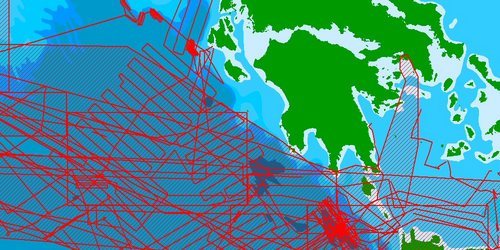

Figure 4: Zoom in on the map of the CDI user interface with hydrographic surveys

Figure 5: Inclusion of EMODNet Hydrography digital bathymetry WMS layer in the CDI user interface

- Functions have been added that allow users to export their metadata results to a CSV file and to store a query, together with the latest configuration of added GIS layers, as a favourite in their browser. The URL can be forwarded to other users to repeat the same query with the same added layers.

To exchange CDI entries from the SeaDataNet portal to other portals and systems also a number of services have been launched:

- The CDI entries in the CDI portal are also available as WMS and WFS services for other portals. For example, dedicated services have been set up and are operational for the CDI entries that are relevant for the EMODNet Chemistry, Geology, and Hydrography portals. WFS can also be provided with a selection of CDI metadata and a URL to retrieve the full CDI metadata and to reach the shopping basket mechanism for requesting access to the data sets themselves.

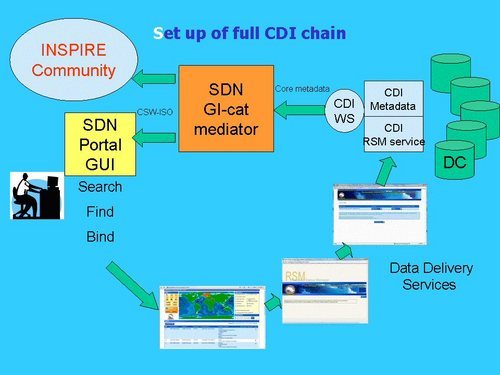

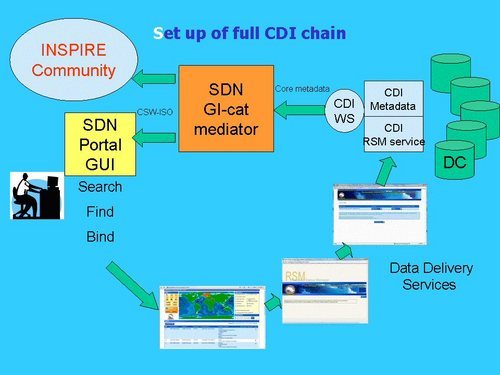

- The CDI XML exchange between the data centres and the SeaDataNet portal makes use of SeaDataNet Schema that is derived from the earlier ISO 19115 DTD. But nowadays the ISO 19139 transport Schema is mature and this has been adopted for INSPIRE. Therefore also a fully INSPIRE Compliant version of the latest CDI format has been defined and documented on the basis of the ISO 19139 standard and Schema. The resulting format has largely the same structure, but instead uses the schema and element names from ISO 19139. Furthermore on top of the SeaDataNet CDI portal a CS-W service has been configured using the GI-CAT application of the University of Firenze to deliver CDI XML in the INSPIRE compliant ISO 19139 format. The service has been configured to deliver XML files that have been aggregated by discipline and data centre to optimise the number of records that are exchanged with external services. Furthermore each aggregated file includes a dedicated URL for retrieving the full collection of CDIs at the SeaDataNet portal and to get access to the shopping process.

Figure 6: Principle of CS-W service for interoperability with INSPIRE
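As an illustration of how an external system might query such a CS-W service, the sketch below builds a key-value GetRecords request using standard OGC CSW 2.0.2 parameters and the ISO 19139 (`gmd`) output schema. The endpoint URL is a placeholder, and whether the SeaDataNet service accepts this exact GET form is an assumption.

```python
from urllib.parse import urlencode


def build_csw_getrecords_url(endpoint, start=1, max_records=10):
    """Build a CSW 2.0.2 GetRecords URL asking for ISO 19139 metadata records."""
    params = {
        "service": "CSW",
        "version": "2.0.2",
        "request": "GetRecords",
        "typeNames": "gmd:MD_Metadata",
        "resultType": "results",
        "elementSetName": "full",
        # Namespace URI of the ISO 19139 'gmd' schema
        "outputSchema": "http://www.isotc211.org/2005/gmd",
        "startPosition": start,
        "maxRecords": max_records,
    }
    return f"{endpoint}?{urlencode(params)}"


# Placeholder endpoint, not the real SeaDataNet address
url = build_csw_getrecords_url("https://csw.example.org/csw")
```

Paging through a larger result set would then be a matter of increasing `start` by `max_records` on each request.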

Finally, SeaDataNet also produces a number of regional data products. The data centres have created climatologies of several ocean properties using the data analysis software DIVA. In order to give a common viewing service to those interpolated products, the Ocean Browser web application has been developed and launched. It is based on the OGC WMS and WFS standards. This enables sharing the product maps with other WMS services and also importing WMS and WFS layers from external services such as the EMODNet portals. Data products and the Ocean Browser service are described in separate articles in this Newsletter.
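Because these viewing services follow the OGC WMS standard, a map layer can in principle be requested by any client with a plain GetMap call. The sketch below shows the general shape of such a request under WMS 1.3.0; the endpoint and the layer name are hypothetical, and `CRS:84` is used so that the bounding box is given in longitude, latitude order.

```python
from urllib.parse import urlencode


def build_wms_getmap_url(endpoint, layer, bbox, width=800, height=600):
    """Build a WMS 1.3.0 GetMap URL; bbox is (min_lon, min_lat, max_lon, max_lat)."""
    min_lon, min_lat, max_lon, max_lat = bbox
    params = {
        "SERVICE": "WMS",
        "VERSION": "1.3.0",
        "REQUEST": "GetMap",
        "LAYERS": layer,
        "STYLES": "",          # empty value requests the default style
        "CRS": "CRS:84",       # WGS 84 with longitude, latitude axis order
        "BBOX": f"{min_lon},{min_lat},{max_lon},{max_lat}",
        "WIDTH": width,
        "HEIGHT": height,
        "FORMAT": "image/png",
    }
    return f"{endpoint}?{urlencode(params)}"


# Placeholder endpoint and layer name, used for illustration only
wms_url = build_wms_getmap_url(
    "https://wms.example.org/wms", "example_layer", (10.0, 38.0, 16.0, 42.0)
)
```

A portal that imports an external layer would typically first issue a GetCapabilities request to discover the available layer names before building a GetMap URL like this one.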

SeaDataNet is already an operational infrastructure but many improvements and innovations are required to meet all requirements of user communities and data types. Therefore we hope that the EU will soon open negotiations with our consortium to implement the SeaDataNet II proposal.

The Geo-Seas project is implementing an e-infrastructure of 26 marine geological and geophysical data centres, located in 17 European maritime countries. Users are enabled to identify, locate and access pan-European, harmonised and federated marine geological and geophysical datasets and derived data products held by the data centres through a single common data portal.

Figure 1: Geo-Seas portal at http://www.geo-seas.eu

The project is implemented in the EU FP7 programme and has a duration of 42 months, from 1st May 2009 until 31st October 2012. In practice Geo-Seas is expanding the SeaDataNet infrastructure to handle marine geological and geophysical data, data products and services, creating a joint infrastructure covering both oceanographic and marine geoscientific data. This is facilitated by 6 SeaDataNet partners also being partners in the Geo-Seas project.

Common data standards and exchange formats are agreed and implemented across the Geo-Seas data centres. For that purpose Geo-Seas is adopting the SeaDataNet infrastructure approach, architecture and components to interconnect the geological and geophysical data centres and their collections. An important activity in the project is to examine the fitness for purpose of the SeaDataNet standards such as CDI metadata format, Common Vocabularies, and data exchange formats for giving unified access to the geology and geophysics data sets. Thereby Geo-Seas also takes into account the experience and developments arising from international geological projects, such as OneGeology and GeoSciML. Many of the Geo-Seas partners are also partners in these international projects.

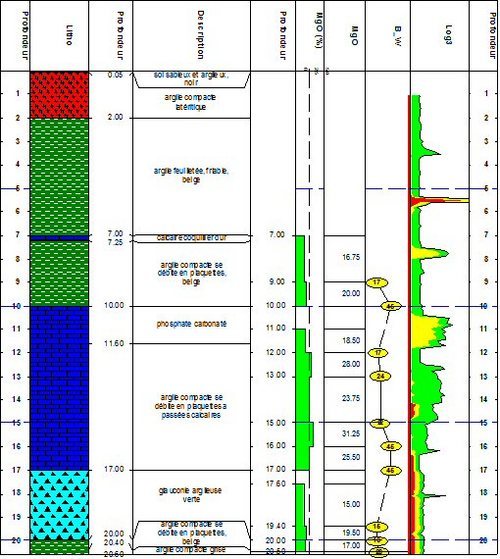

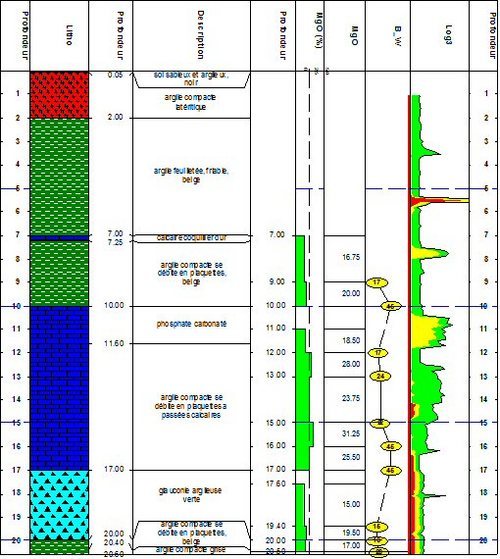

This activity has already resulted in an upgrading of the CDI format to handle also tracks and polygons in a detailed way using GML and including an option for additional service bindings in the CDI format that enables linking (pre)viewing services. Additionally the common vocabularies have been expanded considerably in number of terms but also by adopting GeoSciML vocabularies, for example for classification of sediment types. Furthermore a dedicated guideline was prepared how to describe the results from geological analyses on a seabed sample or core in the SeaDataNet ODV format.

In order to accommodate the upgrade to the CDI the SeaDataNet MIKADO tool used by the data centres to create their metadata and the SeaDataNet CDI Data Discovery and Access Service used by portal users to find and retrieve data sets have also been upgraded. This included upgrading the associated import, validation and storage applications.

Because Geo-Seas is making use of the tools and underlying infrastructure of SeaDataNet these upgrades have been undertaken by Geo-Seas partners in cooperation with the SeaDataNet Technical Task Team. The upgrading has resulted in enriching the SeaDataNet standards and tools and these are now implemented for all projects and portals related to SeaDataNet such as Geo-Seas, Upgrade Black Sea SCENE, CaspInfo, and EMODnet pilots.

The Geo-Seas data centres are now well underway in establishing their connection to the infrastructure by installing and configuring the SeaDataNet Download Manager software and in preparing CDI metadata entries and data files in the agreed common formats by mapping and converting their local metadata, vocabularies and data using the SeaDataNet MIKADO and NEMO tools. This is making an increasing volume of marine geological and geophysical metadata and data available to users through the dedicated Geo-Seas portal and the overall SeaDataNet portal. This concerns seabed samples and cores, bathymetric, magnetic, and seismic surveys. The locations and metadata will also be made available as part of the CDI WMS and WFS services by which these can be added as extra layer and information to the EMODnet Geology portal (see http://www.emodnet-geology.eu) that is developing a seamless seabed surface map of 1:1 million for the European seas.

Another interesting activity in Geo-Seas with wider potential is to expand the Geo-Seas services with a number of both standard and prototype data products and viewing services. An active dialogue and consultation has been undertaken with potential user communities, such as marine researchers, environmental agencies, offshore industry, and oceanographic and hydrographic organisations to retrieve their user requirements and ideas for a number of standard data products and services. For feasible standard products, algorithms and production prototypes will be developed using present technologies, such as 2-D mapping and viewing tools. Also a number of more complex data products will be explored, such as Digital Terrain Modelling (3-D), by developing and testing prototypes, and taking into account experiences and software packages from other international projects, such as OneGeology and GeoSciML. This will include development and application of OGC WMS and WFS services that can be shared on a wider basis with other portals, such as the EMODnet portals.

Figure 2: Log of submarine borehole (source: BRGM)

Portal: http://www.geo-seas.eu/

The European Commission has concluded service contracts for creating pilot components of the European Marine Observation and Data Network (EMODnet). The overall objective is to create pilot portals and to migrate fragmented and inaccessible marine data into interoperable, continuous and publicly available data streams for complete maritime basins. The results will help to define processes, best technology and approximate costs of a final operational European Marine Observation and Data Network.

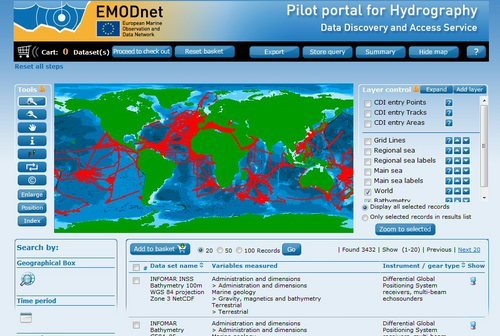

The EMODnet-Hydrography portal is one of the portals being developed. The portal was launched in June 2009 and is providing an increasing level of services and products. Very good progress is being made in compiling an inventory of available bathymetric surveys (plummets, single and multibeam surveys) that is provided as a Discovery and Data Access service, adopting the SeaDataNet Common Data Index (CDI) infrastructure. Moreover, survey data are gathered and processed as input for producing a higher resolution digital bathymetry for the following sea regions in Europe:

- the Greater North Sea, including the Kattegat and stretches of water such as Fair Isle, Cromarty, Forth, Forties, Dover, Wight, and Portland

- the English Channel and Celtic Seas

- Western Mediterranean, the Ionian Sea and the Central Mediterranean Sea

- Iberian Coast and Bay of Biscay (Atlantic Ocean)

- Adriatic Sea (Mediterranean)

- Aegean - Levantine Sea (Mediterranean).

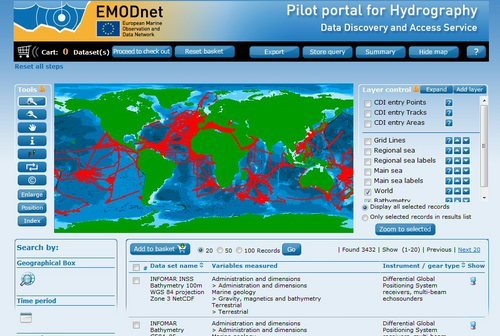

Figure 1: the CDI Data Discovery and Access Service for querying and requesting access to bathymetric surveys that are managed by distributed data providers

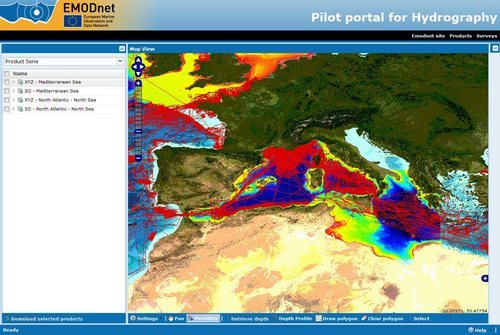

Through a dedicated Data Products viewing service users have public access to the following geographical information system layers:

- water depth in gridded form on a DTM grid of a quarter of a minute of longitude and latitude

- water depth in vector form with isobaths

- option to view QC parameters of individual DTM cells and references to source data

- option to view depth profiles along tracklines

- coastlines

- option to add layers through OGC WMS protocol, such as the tracks of bathymetric surveys as included in the CDI Discovery service

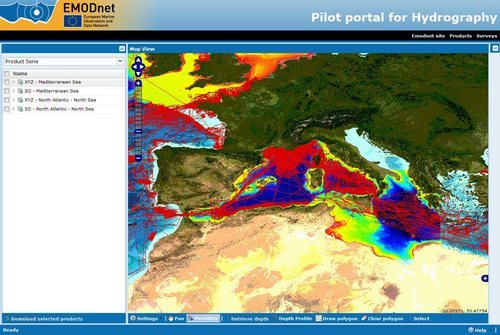

Figure 2: the Data Products Viewing Service zooming in on the Central Mediterranean Sea with EMODNet digital bathymetry and WMS overlay of CDI metadata of original surveys and WMS GEBCO bathymetry as background layer

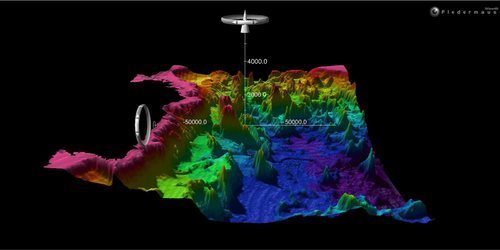

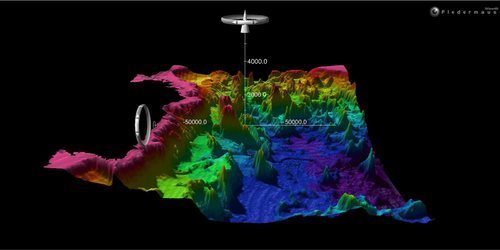

Users can download the digital bathymetry as DTM tiles in xyz and a number of other formats including the Fledermaus SD format. This is fit for viewing the bathymetry in 3D with the free iView4D Fledermaus viewer. Additionally users can retrieve the metadata of original surveys and can submit requests for access of these data sets to their distributed data managers. The OGC WMS support also enables users to use the digital bathymetry in combination with data products from other portals including the other portals developed as part of the EMODnet preparatory actions for marine biology, marine chemistry, marine geology, physics and marine habitats.

Figure 3: Fledermaus 3D view of downloaded EMODNet digital bathymetry of the Tyrrhenian Sea near Corsica and Italian west coast.

Various organisations are engaged in the acquisition and provision of hydrographic data and data products. These comprise:

- Hydrographic Offices, that are responsible for surveying the navigation routes, fairways and harbour approach channels and producing from these the nautical charts on paper and as Electronic Nautical Charts (ENC), that are used for navigation.

- Authorities, responsible for management and maintenance of harbours, coastal defences, shipping channels and waterways. These authorities operate or contract regular bathymetric monitoring surveys to assure that an agreed nautical depth is maintained or to secure the state of the coastal defences.

- Research institutes, that collect multibeam surveys as part of their scientific cruises.

- Industry, especially the energy industry, that contracts multibeam surveys for pipeline and cable routes (in case of windfarms) and the telecommunication industry for phone and internet cable routes.

The EMODnet Hydrography and Seabed mapping partnership is actively seeking cooperation from these organisations for additional data sets (single and multibeam surveys, sounding tracks, composite products) to support a good geographical coverage and high quality of the hydrographic data products. The received data sets are used for producing regional Digital Terrain Models (DTM) with specific resolution (0.25 minute * 0.25 minute) for each geographical region. The data sets themselves are not distributed but described in the CDI metadata, giving clear information about the background survey data used for the DTM, their access restrictions, originators and distributors and facilitating requests by users to originators. This way the portal provides originators of hydrographic data sets an attractive shop window for promoting their data sets to potential users, without losing control. The CDI metadata are also included in the overall SeaDataNet CDI portal that is powering the CDI interface in the EMODNet Hydrography portal.
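To give a feeling for the 0.25 minute * 0.25 minute resolution, the following sketch assigns soundings to grid cells and estimates the cell size in metres. It assumes a grid origin at longitude -180 and latitude -90 and uses a simple per-cell mean as a stand-in; the actual DTM production method of the project is not described here, and all function names are hypothetical.

```python
import math

CELLS_PER_DEGREE = 240          # 60 arc minutes / 0.25 arc minute
METRES_PER_ARC_MINUTE = 1852.0  # approximate length of one arc minute of latitude


def cell_index(lon, lat, origin_lon=-180.0, origin_lat=-90.0):
    """Return (row, col) of the 0.25 arc minute cell containing a position."""
    col = math.floor((lon - origin_lon) * CELLS_PER_DEGREE)
    row = math.floor((lat - origin_lat) * CELLS_PER_DEGREE)
    return row, col


def cell_size_m(lat):
    """Approximate (north-south, east-west) cell size in metres at a latitude."""
    north_south = 0.25 * METRES_PER_ARC_MINUTE
    east_west = north_south * math.cos(math.radians(lat))
    return north_south, east_west


def grid_mean_depth(soundings):
    """Average (lon, lat, depth) soundings per cell; a deliberately simple stand-in."""
    sums = {}
    for lon, lat, depth in soundings:
        key = cell_index(lon, lat)
        total, count = sums.get(key, (0.0, 0))
        sums[key] = (total + depth, count + 1)
    return {key: total / count for key, (total, count) in sums.items()}


soundings = [
    (12.001, 40.001, 100.0),
    (12.002, 40.002, 200.0),  # falls in the same cell as the first sounding
    (12.010, 40.001, 50.0),   # falls in a neighbouring cell
]
grid = grid_mean_depth(soundings)
```

A cell measures roughly 463 m from north to south everywhere, while its east-west extent shrinks with the cosine of the latitude, to about half that at 60 degrees north.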

Portal: http://www.emodnet-hydrography.eu

The EMODnet Chemical pilot is undertaken by 25 partners of SeaDataNet, selected on their geographical coverage and specific expertise. It deals with the Greater North Sea and the Black Sea region. Additionally five spots from the Mediterranean (Balearic Sea, Gulf of Lion, North Adriatic Sea, Gulf of Athens and NE Levantine basin) have been included.

The project is focused on the groups of chemicals required for monitoring the new EU Marine Strategy Framework Directive (MSFD):

1. synthetic compounds (e.g. pesticides, antifoulants, pharmaceuticals)

2. heavy metals

3. radionuclides

4. fertilisers and other nitrogen- and phosphorus-rich substances

5. organic matter (e.g. from sewers or mariculture)

6. hydrocarbons including oil pollution.

The implementation of this Directive will require member states in the coming years to report on the state of the seas and oceans in an integrated pan-European manner. Therefore the European Environment Agency is planning WISE-Marine, the marine component of the EEA's Shared Environmental Information System (SEIS). WISE-Marine is intended to inform the European public on indicators for Good Environmental Status of sea basins.

The recent Marine Knowledge 2020 communication from the EU strives for a situation whereby WISE Marine can harvest data and data products from EMODNet and whereby the EEA will encourage member states to participate actively in the EMODNet developments for making their environmental data available.

Therefore the EMODNet Chemistry project can be considered a challenging pilot to explore how the SeaDataNet infrastructure can be adopted for compiling as complete an inventory as possible of existing chemistry data sets, seeking cooperation with monitoring agencies and research institutes, gathering access to the data sets themselves, and preparing station trends and data products that can support basin environmental assessments.

The project has made good progress since the start in June 2009. It has contributed to a considerable expansion of the SeaDataNet vocabularies for naming chemical parameters in water, sediment and biota. Also a large volume of data sets has been compiled so far that are included in the CDI Data Discovery and Access Service, while the actual data sets have been gathered in 3 regional project databases for analyses and production of common products.

For the North Sea an up-to-date and extensive data collection has been compiled by involving the environmental monitoring agencies of the North Sea countries and complementing these with data from research institutes and cruises. For the Black Sea region it takes more effort to achieve such an up-to-date and complete collection; however, through synergy with the Upgrade Black Sea SCENE project and direct involvement of the Black Sea Commission Secretariat, the gathering is now making very good progress. This is important because it will also reveal where there are gaps in the monitoring which might need to be filled at a later stage for the MSFD implementation.

The EMODNet Chemistry group works together with representatives of the regional conventions (OSPAR, HELCOM, Black Sea Commission, and UNEP/MAP - MedPol) and EEA to achieve cooperation of environmental monitoring authorities in the countries around the target sea areas. Moreover experts from these international parties and ICES are engaged in the analysis and quality control processes with the aim to establish harmonised and useful products. Therefore in September 2010 a Workshop took place with many experts to discuss what kind of products could be produced and the way to validate products.

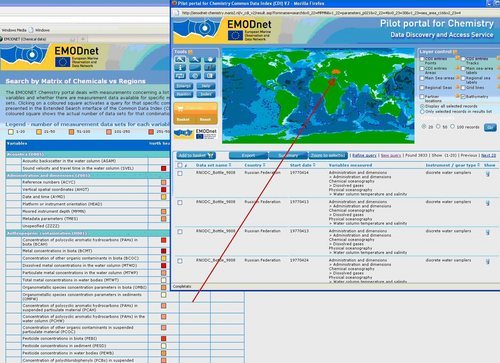

The EMODNet Chemistry pilot not only drives a further expansion of the volume and types of data handled in the SeaDataNet CDI service but it also leads to a number of service innovations.

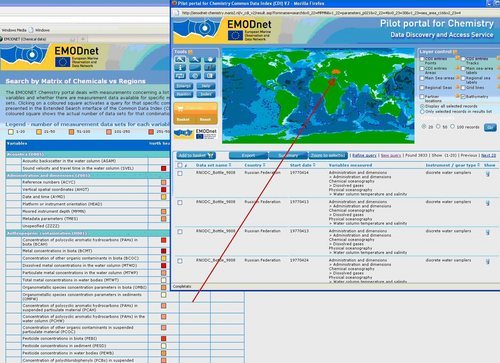

The EMODNet Chemistry portal provides the usual CDI user interfaces and a new dedicated version called "CDI matrix Variables VS Regions". This matrix shows in a clear way the data availability in the 3 regions of interest for each parameter considered. The matrix is linked directly to the CDI discovery service. Colours indicate the number of measurements. Clicking on a coloured square activates a query for that specific combination of variable and marine region, whereby the results are presented in the user interface of the Common Data Index (CDI) Data Discovery and Access Service. Hovering the mouse over a coloured square shows the actual number of data sets for that combination.

Figure 1: New CDI discovery service "matrix Variables VS Regions".
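The idea behind such a matrix can be sketched in a few lines of Python: count the entries per combination of variable and region, then map each count to a colour class. The record layout, the sample values and the colour thresholds below are assumptions for illustration and do not reflect the portal's actual implementation.

```python
from collections import Counter


def build_matrix(records):
    """Count data sets per (variable, region) combination."""
    return Counter((r["variable"], r["region"]) for r in records)


def colour_class(count):
    """Map a count to a colour class; the thresholds are hypothetical."""
    if count == 0:
        return "none"
    if count < 10:
        return "low"
    if count < 100:
        return "medium"
    return "high"


# Invented sample records, for illustration only
records = [
    {"variable": "heavy metals", "region": "Black Sea"},
    {"variable": "heavy metals", "region": "Black Sea"},
    {"variable": "heavy metals", "region": "Greater North Sea"},
    {"variable": "hydrocarbons", "region": "Greater North Sea"},
]
matrix = build_matrix(records)
cell = ("heavy metals", "Black Sea")
label = colour_class(matrix[cell])
```

Because `Counter` returns zero for combinations that never occur, empty squares in the matrix need no special handling.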

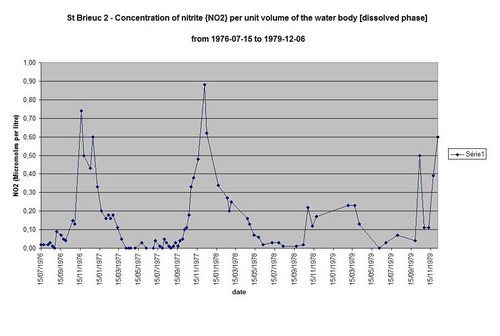

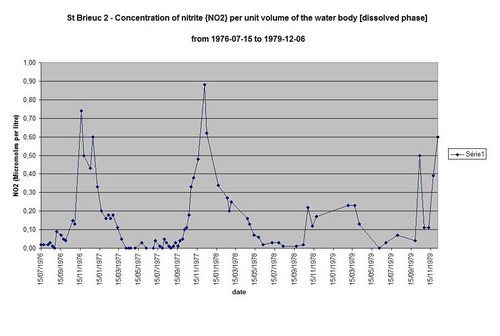

The chemistry data products are made available through the SeaDataNet Ocean Browser visualisation service. A test was set up to explore a possible technical solution for visualising "time series data". An interim solution is to provide preprocessed time series plots (using ODV software) and then to visualise these through the Ocean Browser viewing service, as illustrated below. This is a temporary solution, while a structural solution with on-the-fly visualisation of distributed data sets is foreseen in the SeaDataNet II project.

Figure 2: Ocean Browser "time series mode"

Figure 3: Time series Plots linked to Ocean Browser viewing service

Portal: http://www.emodnet-chemistry.eu

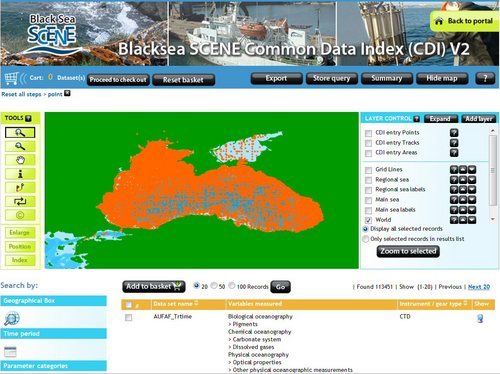

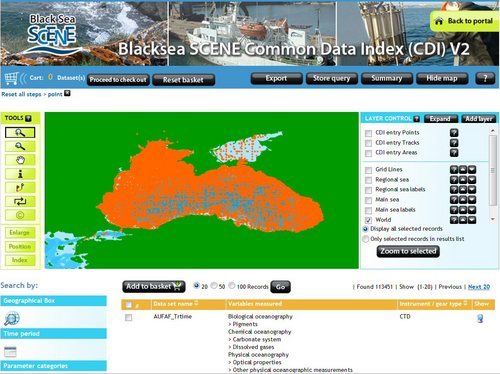

The Upgrade Black Sea SCENE (UBSS) project is an FP7 project running from 2009 until the end of 2011. It aims at building marine data management capacity in the Black Sea countries by establishing national NODC networks that are integrated in the SeaDataNet infrastructure. Training is given in the SeaDataNet tools, standards and data quality control methods. In addition, Black Sea data quality control workshops are organised with all partner institutes to validate the overall quality of their data sets and to harmonise them.

The partnership comprises 51 partners, including 41 local data centres from the 6 Black Sea countries. These include the 6 NODCs that are also partners in SeaDataNet. The 41 data centres are populating the SeaDataNet directories EDMERP, EDMED, CSR, EDIOS and EDMO with local contributions. Moreover, the 41 data centres are adopting the SeaDataNet standards and tools to connect to the SeaDataNet infrastructure and to participate in the CDI Data Discovery and Access Service, providing unified access to the Black Sea data sets that they manage.

The first year of the project focused on getting acquainted with SeaDataNet data management practices and relevant EU Directives, and on populating the SeaDataNet meta directories. Last year all focus was directed towards populating the CDI service and getting connected. As a result, more than 60% of the 41 data centres are now operationally connected, while the remaining centres are making good progress towards achieving their connection soon. The NODCs have a guiding role for the institutes in their country, giving support for preparing CDI entries, converting data sets to SeaDataNet data exchange formats and configuring the Download Manager connection.

Figure 1: UBSS CDI interface with gathered CDI entries

Meanwhile, the volume of covered data sets is also increasing well. In recent months a special focus has been directed towards gathering entries for marine environmental monitoring data sets that are of importance for the EMODNet Chemistry project. The list of EMODNet relevant data sets included in the CDI service has recently been validated for completeness by the Black Sea Commission Secretariat, a partner in the project, and following their observations specific data centres are encouraged to bring in additional data sets.

In April 2011 dedicated DQC Workshops are planned for Black Sea chemistry and marine biology. The chemistry Workshop will be joined by EMODNet Chemistry partners to consider the present data collection and to contribute to the validation of regional chemistry data products for the Black Sea.

Black Sea Outlook Conference - 31 October - 4 November 2011

The Black Sea Commission and the UBSS project are organising a joint conference. This third Black Sea Commission (BSC) Scientific & Final UBSS Project Conference will take place in Odessa, Ukraine on 31st October - 4th November 2011. The short name of the Conference is 'Black Sea Outlook'; the long name reveals the main objective of the event: Let us work together towards better protection of the Black Sea. More information can be found in the official announcement on the UBSS portal. Interested persons are kindly invited to consider registration and submission of Abstracts as soon as possible, but not later than 1st of June 2011.

Portal: http://www.blackseascene.net

SeaDataNet provides an integrated and harmonised overview and access to data resources, managed by distributed data centres. Moreover it provides users common means for analysing and presenting data and data products. Therefore SeaDataNet has designed and implemented an overall system architecture, common vocabularies and common software tools for data centres and users. These common vocabularies and software tools are freely available via the SeaDataNet portal in the Standards & Software section.

For Data Centres it concerns:

- Common Vocabularies web service and client interface for standard mark-up of metadata

- MIKADO XML editor for editing and generating SeaDataNet XML metadata entries

- NEMO for conversion of any ASCII format to the SeaDataNet ODV4 ASCII format

- Med2MedSDN for conversion of the Medatlas format to the SeaDataNet Medatlas format

- Download Manager for connecting systems of Data Centres to the SeaDataNet portal for data access

- XML Validation web service to validate new XML entries.

For end users it concerns:

- Ocean Data View (ODV) software package for analysing and visualising of downloaded data sets

- DIVA software package and service for interpolation and variational analysis of data sets

The Common Vocabularies are regularly expanding with many new terms under influence of the various SeaDataNet related projects and additional functionality has been added to the various software tools. The following articles give an illustration.

SeaDataNet maintains and provides a number of metadata services: cruise summary reports (CSR), marine environmental data sets (EDMED), marine environmental research projects (EDMERP), and marine monitoring programmes and stations (EDIOS). Furthermore it gives overview and access to data sets in standard formats through the common data index (CDI) service. The metadata formats are based upon the ISO 19115 standard for geographic data and information.

Common vocabularies have also been set up to make sure that all partners share the same standards for metadata exchange. The project has also defined common formats for data exchange and distribution: NetCDF and ODV (Ocean Data View), both mandatory, and MEDATLAS, which is optional.

The software tools NEMO and MIKADO have been developed and are maintained to support data providers to generate standard metadata entries and standard data files.

NEMO

NEMO is reformatting software used for data exchange from SeaDataNet data centres and portal to users. Its objective is to reformat ASCII files of vertical profiles (like CTD, Bottle, XBT), time-series (like current meters, sea level data) or trajectories (like thermosalinograph data) to a SeaDataNet ASCII format (ODV or MEDATLAS).

As the input file can be in any kind of ASCII format, NEMO must be able to read all these formats to translate them to ODV or MEDATLAS. To do so, the user of NEMO describes the format of the input files so that NEMO is able to find the information necessary to generate files in the SeaDataNet formats. One very important prerequisite is that in the input files the station information must always be located at the same position: same line in the file, and same position on the line or same column in the case of Comma Separated Value (CSV) format. Furthermore, station information must be in the same format for all stations.

To convert the input files, the user has to proceed in 5 steps:

- Describe the type of file and the type of measurements: one file for one cruise, or one directory with n files for one cruise, or n directories for n cruises or n files not related to cruises, file with separators, vertical profiles, time-series, trajectories.

- Describe the cruise, only if the files are related to one cruise and only if MEDATLAS is the output format.

- Describe the station information: all metadata available on the station in the input file can be described to be kept in the output file; some information is mandatory, such as data type, date, time, latitude and longitude.

- Describe the measured parameters: all measured parameters which need to be kept in the output file must be described; description includes location in input file, format in output file (number of decimal values) and default value in input file and in output file; a formula can be applied on the value of the parameter in the input file, this can be useful for unit conversion, for example.

- Convert the input file(s): choose either the MEDATLAS or ODV format for the file conversion
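The "describe, then convert" principle of the steps above can be illustrated with a minimal Python sketch. It is not NEMO itself and does not produce a valid SeaDataNet ODV or MEDATLAS file: the `ParamSpec` class, the column positions, the missing-value markers and the tab-separated output are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ParamSpec:
    """User-supplied description of one measured parameter (hypothetical)."""
    name: str                 # label for the output column
    start: int                # first character position in the input line (0-based)
    end: int                  # one past the last character position
    decimals: int             # number of decimals in the output
    missing_in: str = "-999"  # default (missing) value in the input file
    missing_out: str = ""     # default (missing) value in the output file
    formula: Optional[Callable[[float], float]] = None  # e.g. a unit conversion


def convert_line(line: str, specs: List[ParamSpec]) -> str:
    """Convert one fixed-column input line to a tab-separated output line."""
    fields = []
    for spec in specs:
        raw = line[spec.start:spec.end].strip()
        if raw == "" or raw == spec.missing_in:
            fields.append(spec.missing_out)
            continue
        value = float(raw)
        if spec.formula is not None:
            value = spec.formula(value)
        fields.append(f"{value:.{spec.decimals}f}")
    return "\t".join(fields)


# Depth in columns 0-5, temperature in kelvin in columns 6-12 (assumed layout).
specs = [
    ParamSpec("Depth [m]", 0, 6, 1),
    ParamSpec("Temperature [degC]", 6, 13, 2, formula=lambda k: k - 273.15),
]

converted = [convert_line(line, specs) for line in ["  10.0 288.15", "  20.0   -999"]]
print(converted)
```

Because the description is data rather than code, the same `convert_line` function can serve any input layout that keeps each parameter at a fixed position, which is the prerequisite stated above.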

MIKADO

MIKADO is an ISO 19115 XML catalogue description generator used to create XML files for metadata exchange of CSR, EDMED, CDI, EDMERP and EDIOS. MIKADO can be used in 2 different ways:

- In manual mode, to input manually information for catalogues in order to generate XML files. Manual input is well adapted if there is a small amount of EDMED, CSR, CDI, EDIOS or EDMERP entries. This manual mode creates XML files one by one and does not require specific database knowledge. The MIKADO manual interface has the same design and behaves in the same way for all the catalogues defined in SeaDataNet. This interface is very intuitive and easy to use: it is composed of user-friendly forms, divided into thematic tabs that the user has to fill in

- In automatic mode, to generate these descriptions automatically if information is catalogued in a relational database or in an Excel file. The user-friendly interface of MIKADO helps the user to define his own configuration, in two steps:

1. definition of connection parameters to access the user local database: these parameters (driver class name, JDBC connect URL, username/password if necessary) are pre-filled by MIKADO for all database management systems;

2. mapping between the user’s database and the XML format: this mapping is performed by SQL queries which extract information from the database. Here knowledge of database management and the SQL language is required, but MIKADO provides pre-formatted queries to help the user. He/she only has to complete the queries and to associate the content of the database with the clearly labelled XML fields.
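The two configuration steps of the automatic mode (connect to a database, then map query results to XML fields) can be sketched as follows. This is an illustration only: MIKADO is a Java tool using JDBC, whereas the sketch uses Python's built-in `sqlite3` module as a stand-in, and the table, column and XML element names are hypothetical rather than taken from the SeaDataNet schemas.

```python
import sqlite3
import xml.etree.ElementTree as ET

# Step 1: connection to the local database (an in-memory stand-in here).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE stations (id TEXT, lat REAL, lon REAL, start_date TEXT)")
conn.execute("INSERT INTO stations VALUES ('ST001', 43.5, 28.6, '2010-06-01')")

# Step 2: an SQL query plus a mapping from XML field name to result column.
QUERY = "SELECT id, lat, lon, start_date FROM stations ORDER BY id"
FIELD_MAP = {
    "identifier": "id",
    "latitude": "lat",
    "longitude": "lon",
    "startDate": "start_date",
}


def rows_to_xml(connection, query, field_map):
    """Return one XML document (as a string) per row returned by the query."""
    cursor = connection.execute(query)
    columns = [description[0] for description in cursor.description]
    documents = []
    for row in cursor:
        record = dict(zip(columns, row))
        root = ET.Element("entry")  # hypothetical root element
        for xml_field, column in field_map.items():
            ET.SubElement(root, xml_field).text = str(record[column])
        documents.append(ET.tostring(root, encoding="unicode"))
    return documents


docs = rows_to_xml(conn, QUERY, FIELD_MAP)
print(docs[0])
```

Keeping the query and the field mapping as separate pieces of configuration mirrors the description above: the user completes the query, then associates each result column with an XML field.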

NEMO and MIKADO interactions

NEMO was designed to be linked to MIKADO through the generation of a text file that can be converted to Excel. The principle (Figure 1) is that while NEMO converts a collection of files (for example a collection of XBT files in a specific geographical area), it also generates a “CDI summary CSV file” which contains the minimum information necessary to create CDI records for population of the SeaDataNet catalogue. This CSV file, once converted to Excel, can be read by MIKADO (automatic generation) using a JDBC driver for Excel; XML CDI files are then generated and can be directly exported to the central SeaDataNet catalogue.

Figure 1: From « raw » data files to SeaDataNet CDI catalogue

These two tools are written in Java, which means that they are portable across multiple environments: Windows (2000, XP, VISTA), Apple, Unix (Solaris) and Linux. They both use the SeaDataNet common vocabularies web services to ensure consistency of the generated metadata and data, whichever data centre has processed them.

NEMO and MIKADO tools make a powerful multi-platforms package useful for harmonised and coherent marine data management. These two software tools and the SeaDataNet Common Vocabularies are available free of charge for any user from the SeaDataNet portal (http://www.seadatanet.org) in the Standards and Software section.

In conjunction with the Ocean Data View (ODV) software for data quality control, analysis and presentation these tools provide a platform sufficient to perform all needed operations in an oceanographic data centre: generate metadata, harmonize and qualify data, prepare data distribution. This package allows setting up an oceanographic data centre compatible with most standards in use in the international marine community.

The Dark Ages

When the SeaDataNet project started in 2006 controlled vocabularies existed within the European oceanographic data management community, but they could not be described as well managed. SeaDataNet's precursor, Sea-Search, populated metadata fields from a set of ‘libraries'. Content governance of these was delegated to individuals, leading to a lack of response to requests for change and errors resulting from the limited available intellectual resource. Their technical governance comprised Excel spreadsheets posted on web sites around the community.

The result of this governance weakness was the development of multiple local copies. Like Darwin's Galapagos Finches, these evolved into entities that were broadly the same but differed in various small, but nonetheless significant, details. Interoperability falls flat if a code is known to a document creator, but not to its recipient.

SeaDataNet Developments

As soon as SeaDataNet started, vocabulary content governance was established in the form of the Technical Task Team. Available expertise was augmented and the governance given a more permanent footing through the establishment of the SeaVoX vocabulary governance group under the joint auspices of the IODE MarineXML Steering Group. An ICES-led governance group developed to manage platform instance vocabulary metadata that evolved out of the ICES Ship Codes. When Geo-Seas joined the SeaDataNet ‘family' geological expertise was brought into the governance process through the formation of a discussion group from the Geo-Seas partnership.

Technical governance was provided through SeaDataNet's adoption and further development of the NERC Vocabulary Server technology initially developed by the NERC DataGrid project. This provides a clearly defined, readily available master copy of all vocabularies with formal versioning. Every vocabulary and every term within a vocabulary is represented by a URI that resolves to a SKOS XML document which delivers labels, definitions and mappings to other related terms. Clients have been developed to present these documents in a format more amenable to humans such as the SeaDataNet Parameter Thesaurus Browser (/v_bodc_vocab/vocabrelations.aspx?list=P081).
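To illustrate what a client does with such a SKOS XML document, the following Python sketch extracts the label, definition and mappings of each concept. The element names come from the W3C SKOS and RDF namespaces; the sample document, its URIs and its texts are invented for the example and are not actual vocabulary server output.

```python
import xml.etree.ElementTree as ET

RDF = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
NS = {"rdf": RDF, "skos": "http://www.w3.org/2004/02/skos/core#"}

# Hypothetical document standing in for what a term URI might resolve to.
SAMPLE = """
<rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
         xmlns:skos="http://www.w3.org/2004/02/skos/core#">
  <skos:Concept rdf:about="http://example.org/vocab/term/EX01">
    <skos:prefLabel>Example temperature term</skos:prefLabel>
    <skos:definition>Illustrative definition text.</skos:definition>
    <skos:broader rdf:resource="http://example.org/vocab/term/EX00"/>
  </skos:Concept>
</rdf:RDF>
"""


def parse_concepts(xml_text):
    """Return label, definition and broader-term mappings for each concept."""
    root = ET.fromstring(xml_text.strip())
    concepts = []
    for concept in root.findall("skos:Concept", NS):
        concepts.append({
            "uri": concept.get(f"{{{RDF}}}about"),
            "label": concept.findtext("skos:prefLabel", namespaces=NS),
            "definition": concept.findtext("skos:definition", namespaces=NS),
            "broader": [
                element.get(f"{{{RDF}}}resource")
                for element in concept.findall("skos:broader", NS)
            ],
        })
    return concepts


concepts = parse_concepts(SAMPLE)
print(concepts[0]["label"], concepts[0]["broader"])
```

In practice the XML text would be fetched from the term's URI; the sketch uses an inline string so that it runs without network access.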

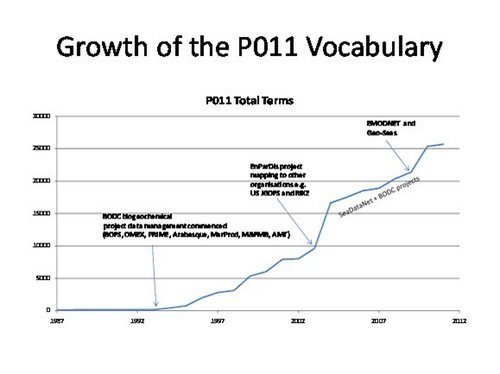

During SeaDataNet there has been a vocabulary population explosion both through terms added to existing vocabularies and through the creation of additional vocabularies to populate metadata fields formerly populated by plaintext. There are now close to 100 vocabularies deemed to be of interest to SeaDataNet and Geo-Seas, which are used for populating metadata fields in EDMED, CSR, EDIOS and CDI documents and the semantically-controlled labelling of parameters in data files.

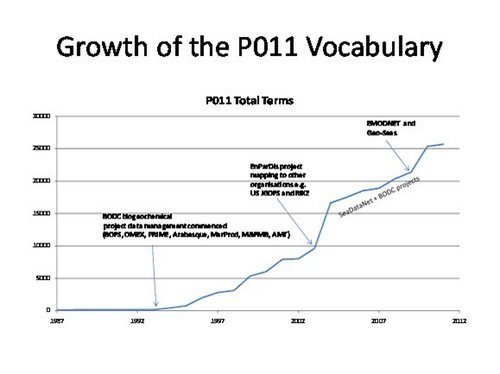

The influence of SeaDataNet and users of its standards (EMODNet and Geo-Seas) may be seen from the growth of the BODC Parameter Usage Vocabulary (P011) used to label data. Together these projects have accounted for approximately 75% of the growth since 2006 and keeping up with their requirements is a full-time job.

Figure 1: P011 Vocabulary growth

Future Developments

Further development of the NERC Vocabulary Server (NVS) forms the bulk of one of the work packages of the EU FP7 NETMAR project (http://netmar.nersc.no/). This will result in delivery of a new version of the server by the end of 2011. The new version will provide additional functionality with cross-cutting thesauri built from multiple existing vocabularies and served according to the latest W3C SKOS standard. The API will be improved and augmented by a truly RESTful interface supporting read and secured write through HTTP method calls. Mappings to URIs outside the NVS namespace (e.g. to the EEA GEMET Thesaurus) and vocabulary term labelling in multiple languages will also be possible. Of course, existing URIs will be preserved and the current server will be maintained until usage logging tells us that it is no longer being used.

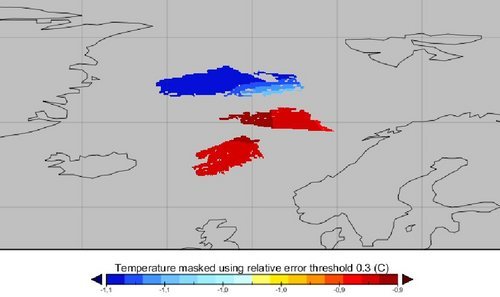

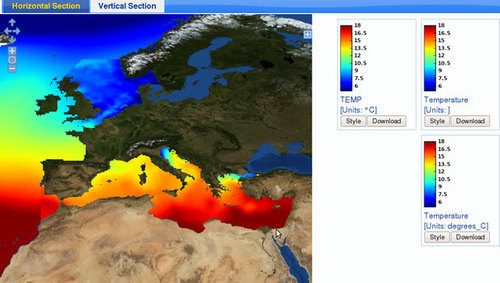

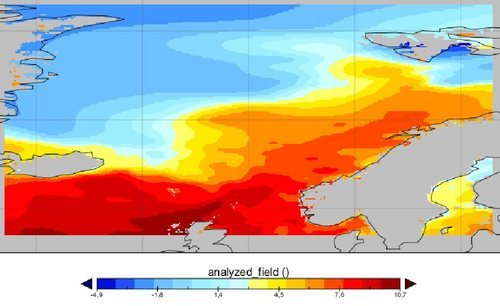

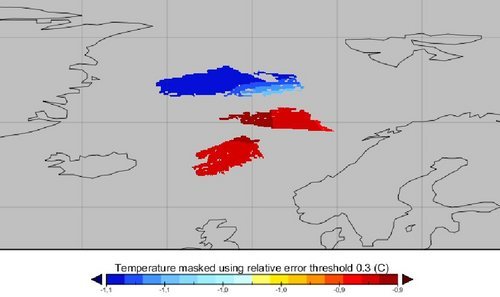

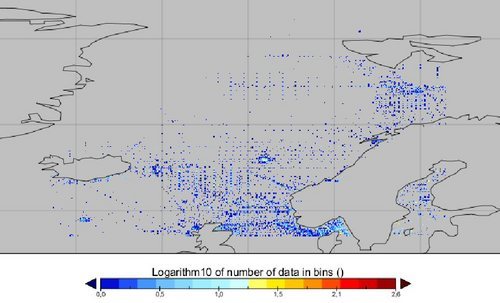

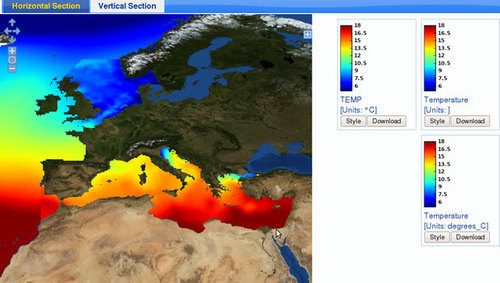

Within the SeaDataNet and EMODNET (Chemical lot) projects, several national ocean data centers have created gridded climatologies of different ocean properties using the data analysis software DIVA [1]. In order to give a common viewing service to those interpolated products, the GHER has developed OceanBrowser [2,3], which is based on open standards from the Open Geospatial Consortium (OGC), in particular Web Map Service (WMS) and Web Feature Service (WFS). These standards define a protocol for describing, requesting and querying two-dimensional maps at a given depth and time. The OceanBrowser software is composed of a client part and a server part. The server essentially supports the standardized requests: it lists all available layers with the formats and projections in which they are available, provides a rendered picture of the requested layer, and provides additional information at a given location (often the value of the field).

The server is built entirely upon open source software. It is written in Python using Matplotlib and basemap for graphical output. It is embedded in Apache and runs on a dedicated Linux server (two Intel Xeon E5420 quad-cores) at the University of Liege.

The client part runs in a web browser and uses the JavaScript library OpenLayers to display the layers from the server. A simplified version of OceanBrowser is also used in Diva-on-web, an on-line tool to create interpolated fields from in situ ocean data [4,5].
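As an illustration of the kind of request a WMS client sends to obtain a map at a given depth and time, the sketch below builds a GetMap URL in Python. The parameter names follow the OGC WMS 1.3.0 specification; the endpoint, the layer name and the way depth and time are passed (through the optional ELEVATION and TIME dimension parameters, with a negative elevation for depth) are assumptions, not a description of the OceanBrowser server.

```python
from urllib.parse import urlencode, urlsplit, parse_qs


def getmap_url(base_url, layer, bbox, width, height,
               elevation=None, time=None, image_format="image/png"):
    """Build a WMS 1.3.0 GetMap request URL for one layer."""
    params = {
        "SERVICE": "WMS",
        "VERSION": "1.3.0",
        "REQUEST": "GetMap",
        "LAYERS": layer,
        "STYLES": "",
        "CRS": "CRS:84",  # longitude/latitude axis order
        "BBOX": ",".join(str(value) for value in bbox),
        "WIDTH": str(width),
        "HEIGHT": str(height),
        "FORMAT": image_format,
    }
    if elevation is not None:
        params["ELEVATION"] = str(elevation)  # assumed depth dimension
    if time is not None:
        params["TIME"] = time                 # assumed time dimension
    return base_url + "?" + urlencode(params)


# Hypothetical endpoint and layer name.
url = getmap_url("http://example.org/wms", "temperature",
                 bbox=(-10, 30, 37, 46), width=800, height=400,
                 elevation=-100, time="2000-01-01")
query = parse_qs(urlsplit(url).query)
print(url)
```

A GetCapabilities request (REQUEST=GetCapabilities) would be the usual first step, since it returns the layers, formats and projections the server actually offers.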

Current features

OceanBrowser currently supports the following operations:

- Horizontal sections of the 4-dimensional fields (longitude, latitude, depth and time) can be visualized at a selected depth and time. The climatological fields can also be interpolated and visualized on arbitrary vertical sections (Figure 1).

- The maps displayed in the browser are created dynamically and therefore several options are made available to the user to customize the graphical rendering of those layers. Layers can be displayed either using interpolated shading, filled contours or simple contours and several options controlling the color-map are also available.

- The horizontal and vertical sections can be animated in order to study the evolution in time.

- Images can be saved in raster format (PNG) and vector image formats (SVG, EPS, PDF). They can also be saved as a KML file so that the current layer can be visualized in programs like Google Earth and combined with other information imported in such programs.

- The underlying 4-dimensional NetCDF file can be either downloaded as a whole from the interface or only as a subset using the linked OPeNDAP server based on pydap.

- The web interface can also import third-party layers by using standard WMS requests. The user needs only to specify the URL of the WMS server and its supported version.

- Several data sets can be visualized at the same time. For example, ocean temperature from the North Sea, Atlantic Ocean and Mediterranean Sea can be visualized at the same time. The consistency of the several ocean products in adjacent regions can be easily assessed and potential problems can be highlighted (Figure 1). This feature is available for horizontal as well as for vertical sections. By choosing simple contours of one field in combination with filled contours of another field, one can visually see the correspondence or location of frontal structures.

Future outlook

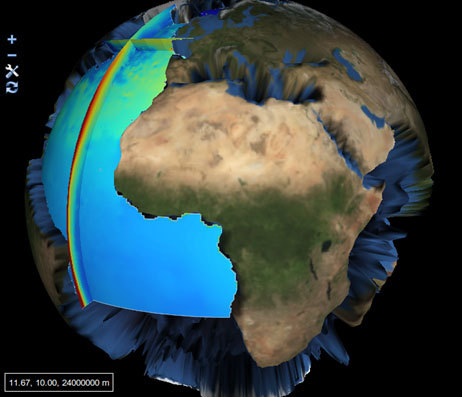

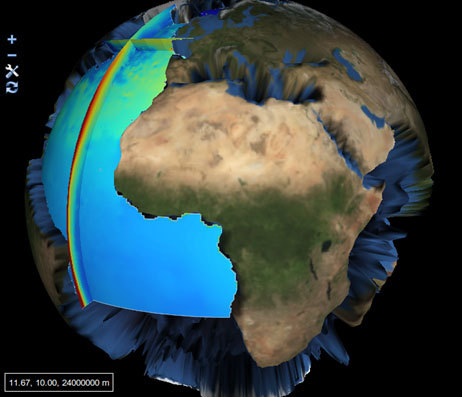

The upcoming HTML5 standard brings a large range of new features to modern web browsers which will also be useful to visualize ocean data. WebGL is a technology which allows displaying three-dimensional data in a web browser using hardware acceleration. An experimental JavaScript library, called EarthGL (Figure 2), has been developed which allows displaying horizontal and vertical sections of ocean fields on a globe with realistic topography [6]. This JavaScript library is strongly inspired by OpenLayers and should be familiar to developers using that library. WebGL is currently supported by Google Chrome (version 9) and the development versions of Mozilla Firefox and Safari.

References

1. Brasseur, P., Beckers, J.-M., Brankart, J.-M., Schoenauen, R., 1996. Seasonal temperature and salinity fields in the Mediterranean Sea: Climatological analyses of an historical data set. Deep-Sea Research 43 (2), 159–192.

2. OceanBrowser interface for SeaDataNet http://gher-diva.phys.ulg.ac.be/web-vis

3. OceanBrowser interface for EMODNET (Chemical lot) http://gher-diva.phys.ulg.ac.be/emodnet

4. Diva-on-web interface http://gher-diva.phys.ulg.ac.be/web-vis/diva.html

5. A. Barth, A. Alvera-Azcárate, C. Troupin, M. Ouberdous, and J.-M. Beckers. A web interface for griding arbitrarily distributed in situ data based on Data-Interpolating Variational Analysis (Diva). Advances in Geosciences, 28:29–37, 2010. doi: 10.5194/adgeo-28-29-2010. URL http://www.adv-geosci.net/28/29/2010/.

6. EarthGL, http://gher-diva.phys.ulg.ac.be/EarthGL/doc/

Figures

Figure 1: Screen shot of OceanBrowser

Figure 2: Experimental EarthGL library based on WebGL showing a horizontal section at 5000 m temperature and two vertical sections of Atlantic Temperature.

The last year has seen four releases of the Ocean Data View software, each one providing numerous new features and consolidating or facilitating the use of existing ones. Two important developments – (1) the re-design of the SDN importer, and (2) the implementation of directional constraints in the DIVA implementation of ODV – are described below. The latest version of the ODV software can be obtained at http://odv.awi.de.

The new SDN importer

As the result of a data query, SDN delivers to the user a number of .zip files, one from every data center responding to the query. These .zip files contain a potentially large number of individual data files, each one holding the data of a single profile, time-series or trajectory. The type of data (profiles, time-series, and trajectories) and the set of variables in the individual data files may differ greatly, thereby precluding single-step ODV spreadsheet import.

The Import > SDN Spreadsheet option addresses these problems and allows the import of many SDN .zip packages in a single operation. Once all import files have been selected, ODV automatically unzips the packages and analyzes the contents of all individual data files. This includes format compliance checks (non-compliant files are excluded), and the grouping of files according to data type. ODV then creates separate data collections for every encountered data type and imports the data of the matching files into the particular collections. The newly created collections may be opened via the File > Recent Files option once the SDN import finishes.
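The unzip, check and group workflow described above can be sketched in Python as follows. This is not ODV's implementation: the compliance rule and the `//DataType=` header line used to recognise the data type are hypothetical stand-ins, since the actual checks ODV performs on SeaDataNet files are not described here.

```python
import io
import zipfile
from collections import defaultdict

KNOWN_TYPES = {"profile", "timeseries", "trajectory"}


def detect_type(text):
    """Return the type declared in a hypothetical '//DataType=' line, or None."""
    for line in text.splitlines():
        if line.startswith("//DataType="):
            value = line.split("=", 1)[1].strip().lower()
            return value if value in KNOWN_TYPES else None
    return None


def group_packages(packages):
    """Group the member files of several .zip packages by data type.

    Returns (collections, rejected): collections maps each data type to a list
    of (package_index, member_name); rejected lists the non-compliant files.
    """
    collections = defaultdict(list)
    rejected = []
    for index, package in enumerate(packages):
        with zipfile.ZipFile(package) as archive:
            for name in archive.namelist():
                text = archive.read(name).decode("utf-8", errors="replace")
                data_type = detect_type(text)
                if data_type is None:
                    rejected.append((index, name))
                else:
                    collections[data_type].append((index, name))
    return dict(collections), rejected


def make_package(files):
    """Build an in-memory .zip package from a {name: content} mapping."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        for name, content in files.items():
            archive.writestr(name, content)
    buffer.seek(0)
    return buffer


packages = [
    make_package({"a.txt": "//DataType=profile\n1\t2\n", "b.txt": "no header\n"}),
    make_package({"c.txt": "//DataType=timeseries\n3\t4\n",
                  "d.txt": "//DataType=profile\n5\t6\n"}),
]
collections, rejected = group_packages(packages)
print(collections, rejected)
```

Each key of `collections` corresponds to one separate data collection in the description above, and `rejected` holds the files excluded by the compliance check.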

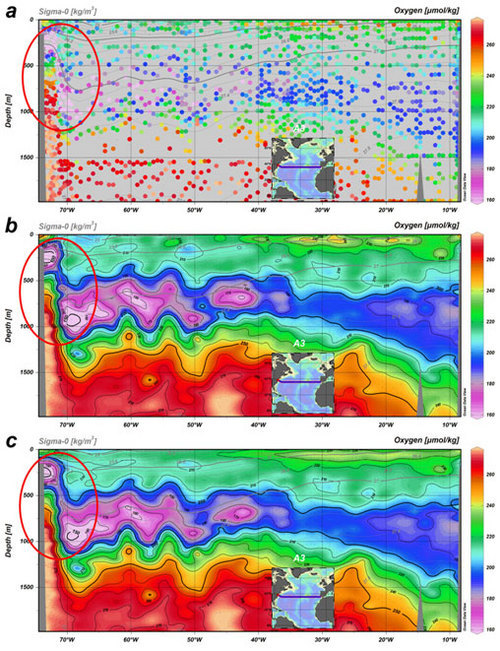

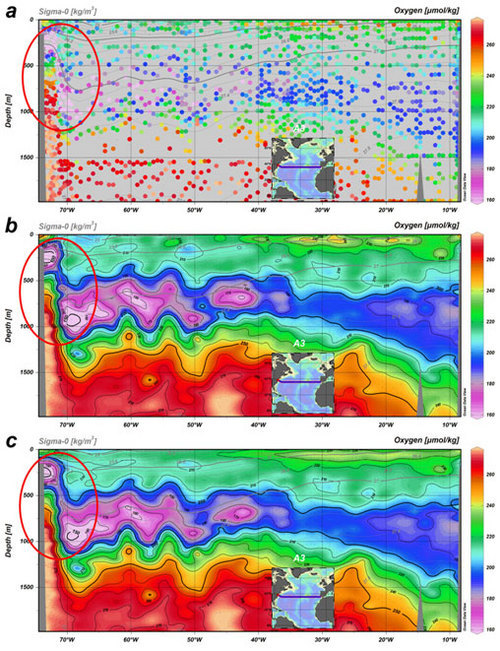

DIVA directional constraints

In section windows, DIVA can apply special directional constraints that tend to align the field along isopycnals, even if the isopycnals are tilted. This behavior is desirable because spreading and mixing of properties along isopycnals are most intense (require the least work), and small property gradients along isopycnals are expected. This DIVA feature is now accessible in the ODV DIVA integration once a potential density derived variable has been defined and the Strength value on the DIVA Settings page of the section’s Properties dialog has been set to a non-zero value.

The effect of the directional constraints is highlighted in Figure xxx showing the upper layer oxygen distribution across the Gulf Stream front. As can be seen in the original data plot xxxa there is an oxygen minimum layer on the western side of the section roughly following the σ0 = 27 isopycnal. This isopycnal lies at about 250 m depth near the coast, but subducts to about 750 m just a few hundred kilometers offshore. Maintaining the oxygen minimum as a continuous feature is a challenge for any gridding algorithm, especially in the sloping region, where O2 values east and west of the feature are much higher. The influence of the neighboring high values leads to an erosion of the minimum, which can be seen in the DIVA gridding plot xxxb. Using DIVA gridding with the directional constraint switched on (strength value 500) shows an enhanced alignment of the gridded field with the isopycnals and an improved representation of the oxygen field in the sloping region. Choosing a larger strength value would improve this further.

Figure xxx: The upper layer oxygen distribution across the Gulf Stream front in the subtropical North Atlantic shown as (a) original data points, (b) DIVA gridded field without directional constraints, and (c) DIVA gridded field with directional constraints (strength value 500).

SeaDataNet is also providing added-value regional data products next to metadata and data. These data products concern gridded climatologies based on available data-sets and are produced by dedicated regional research groups within the SeaDataNet consortium. This article describes the products and related activities for the Atlantic and Global Ocean.

Datasets Used and Regional Areas

For the Atlantic Ocean climatology available data were downloaded from the SeaDataNet CDI portal, and the Coriolis and Sismer databases. Additional data sets were retrieved directly from IEO (Spain), BODC (United Kingdom), MHI (Ukraine), and MI (Ireland). The depth layers investigated are defined to follow IODE levels.

Special work has been done on a specific regional area, the Eastern North Atlantic (ENA). Over the past years a number of ocean climatologies have been constructed for this area (ENA). Their applications have ranged from initial and boundary conditions of ocean models to ARGO profile data validation and quality assurance, including time-series analysis. Traditionally, most applications are based on NODC's WOD (Levitus WOA). This database was compiled from a number of international data centers, including the WOD. The data for this ENA compilation (IEO contribution) are provided by both international data centers and national data centers involved in SeaDataNet.

For the chemical database (V2), it was more difficult to compile data to cover the whole target region. Data collected from exchanges with other partners are mostly limited to hydrological data sets. Therefore it was decided to develop V2 products only for specific areas. For the global product, data are exclusively from the Coriolis database and only concern hydrological parameters.

Quality Assurance Assessment

The quality control system is already used in the framework of the Coriolis project and is improved over time. Complementary methods, such as objective analysis done in real time and in delayed mode, also help to improve the first quality control done on the data. So that regional experts can validate and evaluate the products, a very simple web page has been designed for loading and presenting the maps. For the global products done at Coriolis, experts check the maps and data on a regular basis since data sets are sent to modelers (Mercator project).

For the ENA area, the quality control procedure follows these steps: topography control (to remove stations whose maximum depth exceeds the bottom sounding ± 10%), duplicate control (using a spatiotemporal criterion), and a statistical and range check. This last check is based on the removal of suspicious data prior to further quality control statistics. In a first inspection, profiles clearly exceeding WOA05 climatological temperature and salinity values were rejected, as they likely correspond to misplaced or wrong data (see Figure 1 below). In these profiles, the water mass structure deviated from the main cluster, probably due to errors in station location or to salinity biases. The second step of this last check follows the procedure of Lozier et al., and Curry (1996): a statistical analysis was performed in isopycnal bins. To this end, a set of isopycnal bins was defined for each 2.5° x 2.5° latitude-longitude bin. Rather than applying automatic controls, θ-S curves of all profiles in each of these geographical bins were visualized to detect outliers, out-of-range values or clearly unreasonable data. Subsequently, aided by the criterion of ± 2.8 standard deviations with respect to the idealized linear θ-S curve in the isopycnal bin, questionable data were interactively flagged. For profiles without salinity, a similar control was done on isobaric bins.
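Two of these checks lend themselves to a short Python sketch. It rests on assumptions that the text does not spell out: the topography control is read as rejecting stations whose deepest sample lies more than 10% below the bottom sounding, and the idealized linear θ-S curve is taken to be an ordinary least-squares fit within the bin. In the procedure described above the flagging was interactive; the function below only computes the ± 2.8 standard deviation criterion that aided it.

```python
from statistics import mean, pstdev


def passes_topography(max_depth, bottom_depth, tolerance=0.10):
    """Keep a station unless its deepest sample lies more than `tolerance`
    (10% by default) below the bottom sounding."""
    return max_depth <= bottom_depth * (1.0 + tolerance)


def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    x_mean, y_mean = mean(x), mean(y)
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def flag_outliers(salinity, theta, n_sigma=2.8):
    """Flag samples further than n_sigma standard deviations from the
    linear theta-S fit of the bin."""
    slope, intercept = fit_line(salinity, theta)
    residuals = [t - (slope * s + intercept) for s, t in zip(salinity, theta)]
    sigma = pstdev(residuals)
    return [abs(r) > n_sigma * sigma for r in residuals]


# Synthetic bin: 20 samples on a straight theta-S line plus one offset sample.
salinity = [35.0 + 0.05 * i for i in range(20)] + [35.475]
theta = [10.0 + 4.0 * (s - 35.0) for s in salinity]
theta[-1] += 3.0  # artificial outlier

flags = flag_outliers(salinity, theta)
print(flags.count(True), "sample(s) flagged")
```

Note that a single large outlier inflates the standard deviation itself, so a bin needs a reasonable number of samples before any point can exceed 2.8 standard deviations; this is one reason visual inspection is a useful complement.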

Figure 1. Details of the statistical check. Left: An example of suspicious profiles removed by visual inspection (from Kobayashi and Suga, 2006). Right: An example of statistical check for Marsden square 7303-0. Yellow and red dots are automatically removed during the statistical check. Solid lines and line segments represent the means and the slope of the linear T-S curve, respectively.

Processing

After gathering the selected data, covering the years 1975-2008 for a specific variable related to V1 or V2, the data sets of the Atlantic Ocean were prepared using the Ocean Data View (ODV) software tool to export the data in a common format. Using ODV allowed for checking duplicates between different sources. Quality indicators are used to take into account only good data. Data extracted from Coriolis and Sismer databases take into account quality control done at both centers. The Data-Interpolating Variational Analysis (DIVA, 4.2.1 - October 2010) software is used for the interpolation.

Figure 2. Example of Salinity fields for 1975-2008 at different levels.

The global products are done at the Coriolis data center and the results (Figure 3) can be seen at http://www.ifremer.fr/co/co0525/en/global/. The software used is ISAS V5, developed at Ifremer (LPO).

Figure 3. Temperature and salinity fields for the Global Ocean (from Coriolis website)

The first studied V2 variable is oxygen. Most of the collected data are in the east of the Atlantic Ocean, therefore error standard deviations are higher for the west part of the area (Figure 4).

Figure 4. Oxygen error standard deviation and fields for 1970-2010 at the surface level and oxygen field at 100m for season (January-February-March).

Comparison with WOA climatology for the ENA area

For the Eastern North Atlantic area, data screening extensively used HydroBase tools and techniques, whereas data gridding benefited from the Data-Interpolating Variational Analysis (DIVA, 4.2.1). The WOA climatology is a widely used product to either initialise ocean models or relax them to climatological values. A comparison between the DIVA-Hydrobase climatology and the gridded WOA05 product is presented below.

Whereas the main large-scale thermohaline features of the Eastern North Atlantic are depicted by both products, one regional aspect appears blurred in the WOA fields. The characteristics of embayments such as the Alboran Sea, the Gulf of Cadiz, Bay of Biscay, Celtic Sea and English Channel do not come forward in the WOA climatology. Additionally, aspects related to coastal and shelf oceanography also are obscured in this product, not just because of the larger spatial grid, but also because of the larger smoothing applied in order to remove doubtful values. The convenience of introducing an isopycnal and manual QC technique appears reinforced by the precise definition of frontal zones.

A further example is the propagation of salinity anomalies related to the Mediterranean outflow in the Atlantic (Figure 5), particularly in the vicinity of the Azores plateau. This figure illustrates the power of the DIVA interpolation. The WOA defines a diagonal front between the North Atlantic Central Water and the Mediterranean Water. The Hydrobase-DIVA product, by contrast, permits this front to be twisted around the land mass of the Azores plateau, in agreement with synoptic observations.

Figure 5. DIVA and WOA comparison: temperature and salinity at 1000 m for the ENA area.

Authors: C.Coatanoan - IFREMER, R.Sanchez - IEO, M.J. Garcia - IEO

Reference

Kobayashi, T. and Suga, T. 2006. The Indian Ocean HydroBase: A high-quality climatological dataset for the Indian Ocean. PROGRESS IN OCEANOGRAPHY, 68 (1): 75-114.

V1 Data Products from Satellite observations

The SeaDataNet V1 SST satellite climatology and Chl-a satellite climatology are series of gridded monthly mean maps for the whole Mediterranean Sea. In particular, the SeaDataNet (V1-V2) SST satellite climatology and Chl-a satellite climatology (Figure 1) are computed on two basic interpolation grids:

- 1/8 x 1/8 of a degree, for direct comparison with similar climatologies from in situ measurements. The Geo-box is: longitude 9.25W-36.5E, latitude 30N-46N.

- 1/16 x 1/16 of a degree, the grid currently used for the daily near real time production of optimally interpolated SST. The Geo-box is: longitude 18.125W-36.25E, latitude 30.25N-46N.
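The two interpolation grids can be written down as coordinate axes. The sketch below is illustrative only: it assumes that grid nodes sit on the edges of each Geo-box and that both edges are included, which the text does not state, and the names `grid_axis`, `LOW_RES` and `HIGH_RES` are hypothetical.

```python
from fractions import Fraction


def grid_axis(start, stop, step):
    """Return evenly spaced node coordinates from start to stop, both included.

    Exact fractions are used so that steps such as 1/16 do not accumulate
    floating point error.
    """
    start, stop, step = Fraction(start), Fraction(stop), Fraction(step)
    n_intervals = (stop - start) / step
    if n_intervals.denominator != 1:
        raise ValueError("the box is not a whole number of grid steps wide")
    return [float(start + i * step) for i in range(int(n_intervals) + 1)]


# West longitudes are written as negative numbers.
# Assumption: nodes lie on the Geo-box edges (not stated in the text).
LOW_RES = {
    "lon": grid_axis("-9.25", "36.5", "1/8"),
    "lat": grid_axis("30", "46", "1/8"),
}
HIGH_RES = {
    "lon": grid_axis("-18.125", "36.25", "1/16"),
    "lat": grid_axis("30.25", "46", "1/16"),
}

for name, grid in (("1/8 degree", LOW_RES), ("1/16 degree", HIGH_RES)):
    print(name, len(grid["lon"]), "x", len(grid["lat"]), "nodes")
```

If the products are in fact cell-centred, the axes would be shifted by half a grid step and contain one node fewer in each direction.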

V1 Products: Sea Surface Temperature Climatology (1985-2005)

The climatology was computed from a daily optimally interpolated SST re-analysis, produced by CNR-ISAC and ENEA within the framework of the Mediterranean Operational Oceanography Network (MOON), using optimally interpolated Pathfinder SST data products (Marullo et al. 2007). The data format is NetCDF and follows the CF conventions and the conventions defined within the GODAE Global High Resolution SST Pilot Project.
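As a rough illustration of how monthly mean maps can be derived from a series of daily maps, the sketch below averages each grid cell by calendar month. It assumes a plain unweighted mean and a flat list of cell values per day; the averaging actually used for the product is not described here, and the function name `monthly_climatology` is hypothetical.

```python
from datetime import date


def monthly_climatology(daily_maps):
    """Average daily maps by calendar month, cell by cell.

    daily_maps: iterable of (date, values), where values is a flat list of
    floats and None marks a cell without data (land or missing).
    Returns {month: list of means}, with None where no day had data.
    """
    sums, counts = {}, {}
    for day, values in daily_maps:
        month_sum = sums.setdefault(day.month, [0.0] * len(values))
        month_count = counts.setdefault(day.month, [0] * len(values))
        for cell, value in enumerate(values):
            if value is not None:
                month_sum[cell] += value
                month_count[cell] += 1
    return {
        month: [s / c if c else None for s, c in zip(sums[month], counts[month])]
        for month in sums
    }


# Made-up values for a two-cell grid, for illustration only.
maps = [
    (date(1985, 1, 1), [14.0, None]),
    (date(1986, 1, 1), [16.0, None]),
    (date(1985, 2, 1), [13.0, 12.0]),
]
clim = monthly_climatology(maps)
print(clim)
```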

V1 Products: Chlorophyll-a Climatology (1998-2006)

The climatology calculations were performed using a weighting function chosen after specific tests. A new MED Chl-a dataset has been produced by re-processing all the available Local Area Coverage Level-1A SeaWiFS data (more than 6000 passes, covering the 1998-2005 period) with the MedOC4 algorithm (Volpe et al., 2006). This dataset has been used to compute the monthly average chlorophyll maps for the 1998-2006 period. The Chl-a concentration data format is NetCDF and follows the CF conventions.

Figure 1. SeaDataNet V1 monthly satellite climatology for SST (left) and Chl-a (right).

These satellite-derived climatological data products are currently published in a THREDDS Catalog: http://fe4.sic.rm.cnr.it:8080/thredds/config/SEADATANET/clim.html and will also become available through the SeaDataNet portal.

V1 Products: SST (1985-2005)

The following products have been generated:

- v1.0 (1/8 degree grid): SST product version v1, low resolution

- v1.1 (1/16 degree grid): SST product version v1, high resolution

V1 Products: Ocean Color (1998-2005)

The following products have been generated:

- v1.0 (1/8 degree grid): CHL product version v1, low resolution

- v1.1 (1/16 degree grid): CHL product version v1, high resolution

The V1 SST and Chl-a concentration climatologies have also been updated (V2) with data up to 2008.

V2 Data Products from Satellite observations

Initially the DIVA algorithm and code were not used for the V1 satellite climatology, because satellite data sets contain far more data than in situ measurements and therefore require specific modifications of the code. The DIVA software v4.2.1 was then tested on satellite SST data, and preliminary experiments were performed with the new DIVA version (4.3.0). The new version solved the problems related to the large data volume of satellite data sets, and more experiments were carried out. This has resulted in a new DIVA SST climatology, which was compared with the previous one (OI). Several DIVA analyses with different running parameters were performed in order to obtain the best DIVA SST climatology:

- on a subset of single original SST data maps as DIVA input

- on a subset of single anomaly SST (climatology - original data) data maps: 1) a reference field archive was put in DIVA format; 2) a database of anomalies was created by subtracting the corresponding climatological map from each daily map in the SST archives; 3) the DIVA analysis was performed on this anomaly database; 4) the reconstructed field was then obtained by adding back the reference field (see the scheme in Figure 5)

- on a subset of multi-day SST data maps: the original SST data of a single day together with the original SST data of the 10 previous days, with different weights, as DIVA input

- on a subset of multi-day anomaly SST (climatology – multi SST) data maps
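The multi-day input can be pictured as a pooled set of observations in which older days carry less weight. The sketch below is only an illustration of that idea: the weights actually used are not given in the text, so the geometric decay (`decay ** age`) and the function name `multi_day_input` are assumptions.

```python
def multi_day_input(day_maps, decay=0.8):
    """Pool observations from the analysis day and earlier days.

    day_maps: list of maps ordered from the analysis day (index 0) to the
    oldest day; each map is a flat list with None for cells without data.
    Returns (cell_index, value, weight) tuples.
    Assumption: weight = decay ** age_in_days (not taken from the text).
    """
    pooled = []
    for age, values in enumerate(day_maps):
        weight = decay ** age
        for cell, value in enumerate(values):
            if value is not None:
                pooled.append((cell, value, weight))
    return pooled


# Made-up values: clouds hide cell 1 today, but it was seen yesterday.
today = [18.0, None, 17.5]
yesterday = [17.8, 17.9, None]
obs = multi_day_input([today, yesterday], decay=0.5)
for cell, value, weight in obs:
    print(cell, value, weight)
```

Pooling in this way lets earlier days fill gaps in the analysis day while keeping the most recent data dominant.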

The results were compared with the SST climatology obtained with the CNR-GOS optimal interpolation, in order to define the set of DIVA parameters that gives the best interpolated SST map.

This new V2 SST climatology will soon become available for users to download.

Figure 2. DIVA images layout: 09/01/2006 SST satellite data on the DIVA mesh.

Figure 3. DIVA interpolated data map (left) and error map (right), 09/01/2006 SST data.

Figure 4. The OI interpolated data map (left) and error map (right), 09/01/2006 SST data.

Work done by CNR-ISAC Rome with DIVA on SST data

CNR tested the grid environment in order to overcome timing problems in producing interpolated SST data with error maps. It prepared climatological data for use as the reference field in DIVA. It created a database of anomalies (1998-2006) by subtracting the corresponding climatological map from each daily map in the SST archives. Finally, CNR performed several DIVA analyses with different running parameters. Figures 5 and 6 illustrate the process flow.
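The reference-field and anomaly steps can be sketched as follows. This is a minimal illustration, assuming the anomaly is defined as daily map minus climatological map so that the reference field is added back after the analysis. The function `placeholder_analysis` is a stand-in that merely fills gaps with the mean anomaly; it is not the DIVA analysis, and all names are hypothetical.

```python
def to_anomaly(daily_map, reference):
    """Subtract the climatological reference from a daily map, cell by cell."""
    return [None if v is None else v - r for v, r in zip(daily_map, reference)]


def placeholder_analysis(anomaly):
    """Stand-in for the interpolation step (NOT DIVA).

    Fills cells without data with the mean of the available anomalies.
    """
    known = [a for a in anomaly if a is not None]
    fill = sum(known) / len(known) if known else 0.0
    return [fill if a is None else a for a in anomaly]


def reconstruct(analysed_anomaly, reference):
    """Add the reference field back to obtain the reconstructed SST field."""
    return [a + r for a, r in zip(analysed_anomaly, reference)]


# Made-up values for a three-cell grid; cell 1 has no observation.
reference = [15.0, 16.0, 17.0]
daily = [15.5, None, 17.5]

anomaly = to_anomaly(daily, reference)
sst = reconstruct(placeholder_analysis(anomaly), reference)
print(anomaly, sst)
```

Working on anomalies means that the gap in cell 1 is filled relative to its own climatological value rather than to the mean of the whole map.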

Figure 5. CNR process flow 1

Figure 6. CNR process flow 2

Results:

When DIVA runs over a single field, it automatically subtracts, as a first guess, the mean field calculated directly from the data. When it runs over an anomaly field, the user must tell DIVA not to subtract any first guess. The two results (analysis of the field or of the anomaly) are quite similar, which indicates that the first guess is a good approximation of the mean field. This is illustrated in Figure 7.

Figure 7. Comparison between the single OI interpolated map (1.2) and the map interpolated with DIVA (starting from a single-day map, 1.1, and from a single map reconstructed from the anomaly)

When information from the previous maps is added, the DIVA reconstruction appears better at some points and worse at others.

Figure 8. Comparison between the single OI interpolated map (1.2) and the map interpolated with DIVA (starting from a multi-day map, 2.1, and from a multi-day map reconstructed from the anomaly)

It can be concluded that the interpolated maps and error fields obtained with DIVA are comparable to those obtained with OI.
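One simple way to put a number on such a comparison is to compute the mean difference and the root mean square difference between two maps on the same grid. The text does not say which measures were used, so the sketch below is an assumption, and the name `compare_maps` is hypothetical.

```python
import math


def compare_maps(a, b):
    """Return (bias, rmsd) over the cells where both maps have a value.

    a, b: flat lists on the same grid, with None for cells without data.
    """
    diffs = [x - y for x, y in zip(a, b) if x is not None and y is not None]
    if not diffs:
        raise ValueError("the two maps have no cells in common")
    bias = sum(diffs) / len(diffs)
    rmsd = math.sqrt(sum(d * d for d in diffs) / len(diffs))
    return bias, rmsd


# Made-up values for a four-cell grid, for illustration only.
diva_map = [18.0, 17.0, None, 16.0]
oi_map = [17.0, 17.0, 15.0, 17.0]
bias, rmsd = compare_maps(diva_map, oi_map)
print(f"bias={bias:.3f} rmsd={rmsd:.3f}")
```

The same function can be applied to the two error maps, provided they are expressed in the same units.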

Author: C. Tronconi - CNR

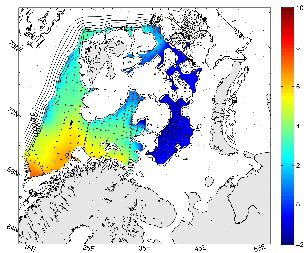

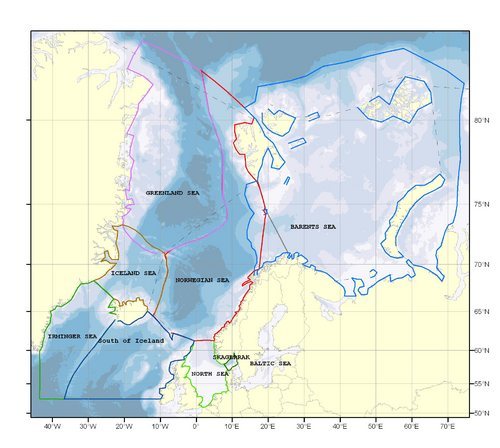

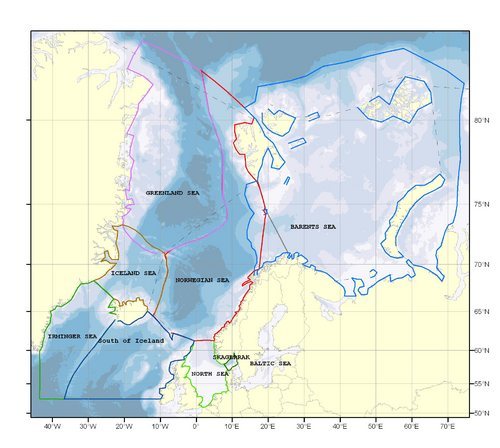

The Arctic and Northern Seas work package includes 8 subareas: Barents Sea, Greenland Sea, Norwegian Sea, Irminger Sea, Iceland Basin, Iceland Sea, North Sea and Skagerrak. It has been difficult to extract data that cover all these areas. The focus has been on three areas: Barents Sea, Norwegian Sea, and North Sea including Skagerrak. Buffer zones between the areas have been identified. The work has been carried out jointly between IMR and MUMM. Figure 1 shows an outline of each area.

Figure 1, Study Area

In the Barents Sea two products have been outlined: a monthly temperature and salinity climatology with a seasonal background field, and a hydrographic atlas of temperature and salinity. For the climatology, the ODV software was used to prepare the data, mainly extracted from the World Ocean Database 2009 (WOD), and the DIVA software was used for the whole production of the product, resulting in a 4D netCDF binary file for each parameter analysed.

WOD select was used to prepare temperature and salinity data for the whole area of interest. The data sources chosen were CTD casts, bottle stations, XBTs and gliders in the time frame from 1960 to 2008. Data were extracted at standard IODE depths and at some additional depths needed in particular areas. The depths in use are:

- Norwegian Sea, 30 layers: 0, 10, 20, 30, 50, 75, 100, 125, 150, 200, 250, 300, 350, 400, 450, 500, 600, 700, 800, 900, 1000, 1100, 1200, 1300, 1400, 1500, 1750, 2000, 2500, 3000

- Barents Sea, 18 layers: 0, 10, 20, 30, 40, 50, 75, 100, 125, 150, 200, 250, 300, 350, 400, 450, near bottom

Data for the North Sea including Skagerrak were extracted from the data sources at WOD and ICES, using the IODE standard depths. The two resulting files were stored in the ODV 4 format, directly ready for the DIVA analysis software.
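Bringing an observed profile onto a fixed set of depth levels can be sketched as below. This is an illustration only: it assumes depths in metres and linear interpolation between neighbouring samples without extrapolation, neither of which is stated in the text, and the name `to_standard_depths` is hypothetical.

```python
# The 30 Norwegian Sea levels listed in the text (assumed to be metres).
NORWEGIAN_SEA_DEPTHS_M = [
    0, 10, 20, 30, 50, 75, 100, 125, 150, 200,
    250, 300, 350, 400, 450, 500, 600, 700, 800, 900,
    1000, 1100, 1200, 1300, 1400, 1500, 1750, 2000, 2500, 3000,
]


def to_standard_depths(depths, values, levels):
    """Linearly interpolate one profile onto the given depth levels.

    depths must be strictly increasing. Levels outside the sampled depth
    range get None (no extrapolation).
    """
    result = []
    for level in levels:
        if level < depths[0] or level > depths[-1]:
            result.append(None)
            continue
        for i in range(len(depths) - 1):
            z0, z1 = depths[i], depths[i + 1]
            if z0 <= level <= z1:
                frac = (level - z0) / (z1 - z0)
                result.append(values[i] + frac * (values[i + 1] - values[i]))
                break
        else:
            # Only reached for a single-sample profile exactly at the level.
            result.append(values[0])
    return result


# Made-up temperature profile sampled at 5, 15 and 35 m.
profile = to_standard_depths(
    [5, 15, 35], [8.0, 7.0, 5.0], NORWEGIAN_SEA_DEPTHS_M[:5]
)
print(profile)
```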

Before running the analysis, the ODV software was used to remove duplicates. In the large file for the entire area, containing more than 1.3 million profiles, approximately 600,000 duplicates were removed, resulting in a file with 750,000 profiles. The file for the North Sea and Skagerrak contains approximately 325,000 profiles.
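The idea behind duplicate removal can be illustrated with a simple key built from position and time. The criteria applied by ODV are not described here, so the rounding tolerance, the record layout and the name `remove_duplicates` in this sketch are assumptions.

```python
def remove_duplicates(profiles, pos_decimals=2):
    """Keep the first profile for each (rounded position, time) key.

    profiles: iterable of dicts with 'lat' and 'lon' in degrees and 'time'
    as any hashable timestamp.
    Assumption: two casts are duplicates when they share the same time and
    the same position after rounding to pos_decimals decimals.
    """
    seen = set()
    unique = []
    for profile in profiles:
        key = (
            round(profile["lat"], pos_decimals),
            round(profile["lon"], pos_decimals),
            profile["time"],
        )
        if key not in seen:
            seen.add(key)
            unique.append(profile)
    return unique


# Made-up casts: the first two differ only by a small position offset.
casts = [
    {"lat": 70.501, "lon": 20.002, "time": "1999-09-01T12:00"},
    {"lat": 70.499, "lon": 20.001, "time": "1999-09-01T12:00"},
    {"lat": 72.000, "lon": 25.000, "time": "1999-09-02T06:00"},
]
unique_casts = remove_duplicates(casts)
print(len(casts), "->", len(unique_casts))
```

A rounding key of this kind can miss near-duplicates that fall on either side of a rounding boundary, so a production check would normally compare distances and time differences directly.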

Figure 2 Triangular mesh at 250m

The data sources for the hydrographic atlas were basically the same, but only Norwegian and Russian data were used because a comparison with a former product from 1997 was needed. The resulting file contained approximately 200,000 profiles. The mesh used is shown in Figure 2. A thorough quality check was performed on this file. The atlas was set up to run on a seasonal basis for the period 1970 - 2008, giving results for each single year. Due to lack of data in two seasons, only a spring season (Feb, Mar, Apr) and an autumn season (Aug, Sep, Oct) were approved for analysis.
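The seasonal set-up of the atlas can be sketched as a grouping of profiles by year and season. The season months and the period are taken from the text; the record layout and the names `season_of` and `group_by_year_and_season` are hypothetical.

```python
from collections import defaultdict

# Seasons approved for analysis and the atlas period, as given in the text.
SEASONS = {"spring": (2, 3, 4), "autumn": (8, 9, 10)}
FIRST_YEAR, LAST_YEAR = 1970, 2008


def season_of(month):
    """Return the season name for a month, or None if it is not analysed."""
    for name, months in SEASONS.items():
        if month in months:
            return name
    return None


def group_by_year_and_season(profiles):
    """Group profiles given as (year, month, payload) tuples.

    Profiles outside the atlas period or outside the two approved seasons
    are dropped.
    """
    groups = defaultdict(list)
    for year, month, payload in profiles:
        season = season_of(month)
        if season is not None and FIRST_YEAR <= year <= LAST_YEAR:
            groups[(year, season)].append(payload)
    return dict(groups)


# Made-up records, for illustration only.
records = [
    (1999, 9, "a"),
    (1999, 10, "b"),
    (1999, 6, "c"),   # June: not in an approved season
    (1969, 3, "d"),   # before the atlas period
    (2000, 2, "e"),
]
groups = group_by_year_and_season(records)
print(groups)
```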

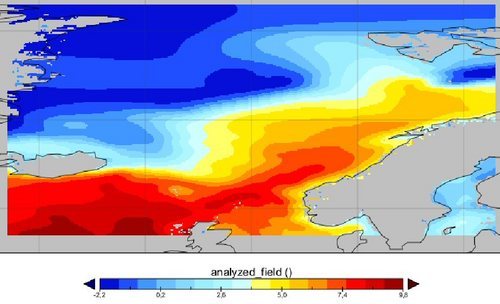

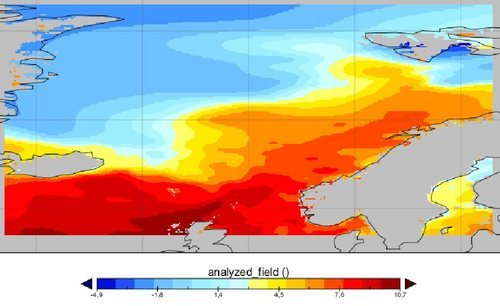

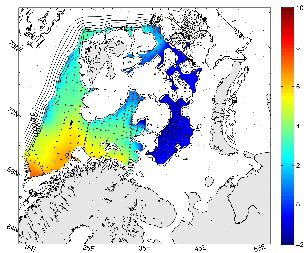

Several options were considered in order to obtain the best possible result for the atlas; a specific depth in a specific year in autumn is shown in Figure 3. Whether to use the reference field or not: using the reference field resulted in a smoother Polar Front. Whether to use the mean background field or not: using the mean background was not reasonable in all fields, but gave a sharper Polar Front.

Figure 3 Temperature 250m autumn 1999

Which error estimate to choose, the poor man's estimate or the full error estimate: the full error estimate was too time consuming, by a factor of 1 to 40. Whether to use an advection field derived from topography: the advection field gave a sharper Polar Front, but the correlation length increased everywhere and needed to be reduced subjectively. The conclusion was to run the atlas with the mean background field, the poor man's error estimate and no advection field.

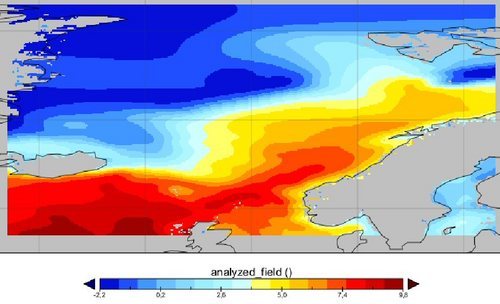

The climatology product contains monthly climatologies at all depths for the chosen time period, with the number of layers/depths depending on the area. Example plots are given in Figures 4, 5 and 7, showing temperature at surface level, and in Figure 6, at 3000 m in the Norwegian Sea.

Figure 4 Temperature reference Dec,Jan,Feb

Figure 5 Dec Temperature at surface

Figure 6 Dec Temperature at 3000m

Figure 7 Dec Available data at surface

Author: Helge Sagen - IMR

The present EU SeaDataNet project officially runs until the end of March 2011, after which all reports have to be finalised. Although SeaDataNet has developed the foundations of a well-structured infrastructure, it is not yet ready and sustainable. Moreover, there are many challenges and recent innovations which need further development, implementation and operation.

Therefore, in November 2010 a proposal for SeaDataNet II was submitted to the EU FP7 Research Infrastructures programme in response to a dedicated Call. The overall objective of the SeaDataNet II project is to upgrade the present SeaDataNet infrastructure into an operationally robust and state-of-the-art Pan-European infrastructure for providing up-to-date and high quality access to ocean and marine metadata, data and data products originating from data acquisition activities by all engaged coastal states, by setting, adopting and promoting common data management standards and by realising technical and semantic interoperability with other relevant data management systems and initiatives on behalf of science, environmental management, policy making, and economy.

A number of the specific objectives of the SeaDataNet II project are:

- Achieving more metadata, data input and data circulation from other relevant data centres in Europe by further development of national NODC networks, thereby promoting and supporting adoption and implementation of SeaDataNet standards, tools and services.

- Achieving data access and data products services that meet requirements of end-users and intermediate user communities, such as GMES Marine Core Service (e.g. MyOcean), WISE-Marine (EEA), regional marine conventions (OSPAR, HELCOM, Black Sea Commission and Barcelona Convention), establishing SeaDataNet as the core data management component of the EMODNet infrastructure and contributing on behalf of Europe to global portal initiatives, such as the IOC-IODE - Ocean Data Portal (ODP), and GEOSS.

- Achieving full INSPIRE compliance and contributing to the INSPIRE process for developing implementing rules for oceanography.

- Achieving an improved capability for handling also marine biological data and interoperability with the emerging biodiversity data infrastructure in close cooperation with actors in the EurOBIS, MarBEF, and LifeWatch initiatives.

The work plan for SeaDataNet II is organised as a cycle of activities that pass from operation to development to operation. This is indicated in the figure below that also highlights the various activities that are part of the work plan. A very important aspect is that new services, components and standards must be implemented over the whole network and without causing disturbances in the operational functioning of the infrastructure. This will be achieved by versioning of services, parallel installation and testing before moving to production, and careful coordination of upgrades implementation.

The proposal has been reviewed by the EU with the help of external experts. Recently we have received a favourable evaluation report and therefore we very much expect to be invited for negotiation with the EU. This would make a further development of the SeaDataNet infrastructure, its data coverage and its function for society possible. In the meantime the SeaDataNet partners guarantee the operation and maintenance of the present SeaDataNet services.
